CrowdStrike:Top 3 Attack Techniques for Intelligentsia: Tool Poisoning, Impersonation and Rugpull Threaten Enterprises

In a new blog post, CrowdStrike reveals three key "agent toolchain attacks" that threaten the security of AI agents: Tool Poisoning, Server Impersonation, and Post-Integration Drift (Rugpull attacks). These attacks take advantage of the AI agent's ability to actively select and execute through natural language descriptions, patterns, and examples to manipulate the language, metadata, and context that guide the agent's decisions. Tool poisoning misleads agents by hiding malicious instructions in tool descriptions; server impersonation steals credentials by masquerading as a legitimate MCP server; and Rugpull attacks silently change tool behavior to implement data exfiltration after integration.CrowdStrike recommends that organizations employ multiple layers of protection such as signature manifests, version locking, bi-directional TLS authentication, parameter validation, and anomaly detection, and has launched the Falcon AI Detection and Response Solution to address the AI attack surface.

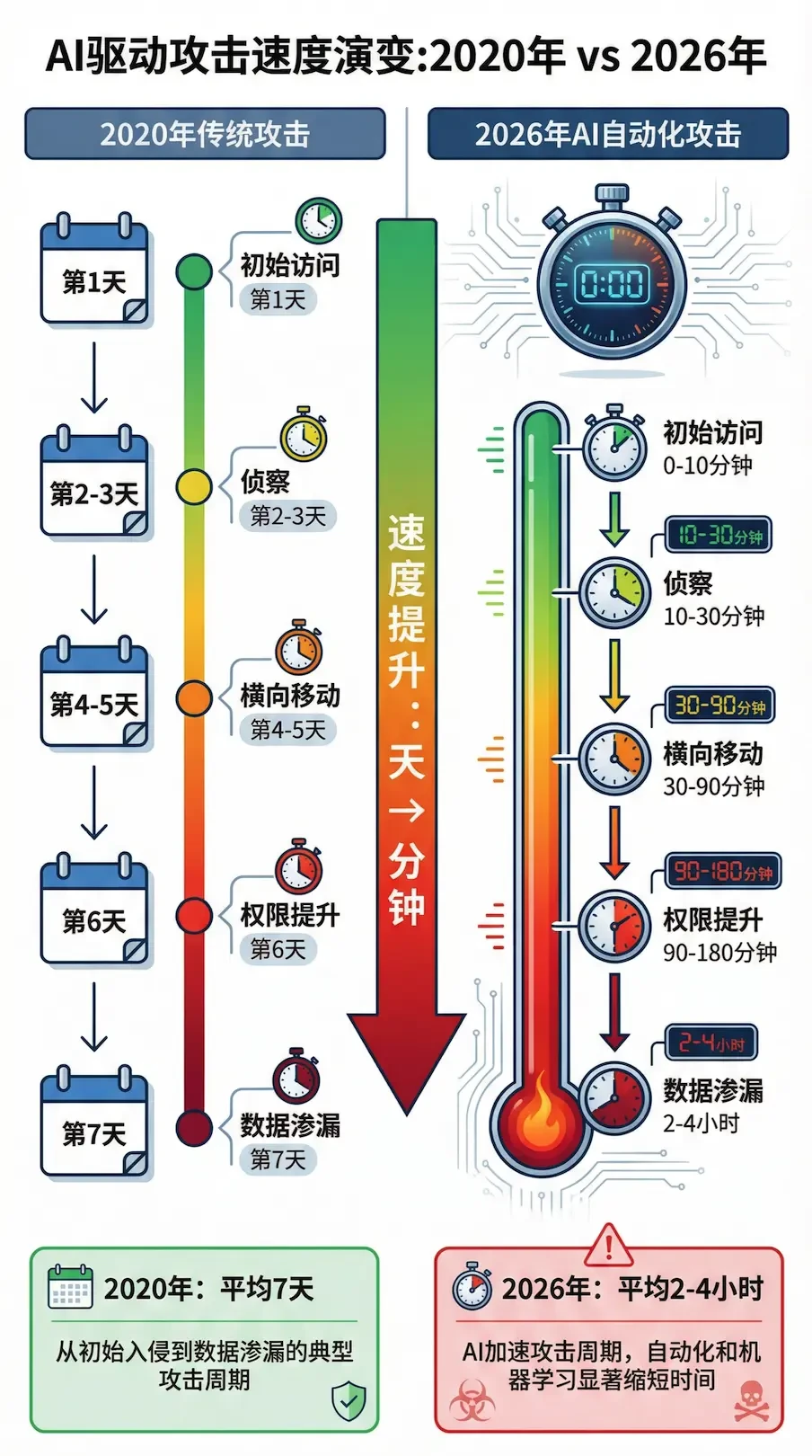

2026 The New Normal in Cybersecurity: AI Automates Attack Chains, Defense Needs to Speed Up from Days to Minutes

The report Cybersecurity 2026: Responsible AI Defense, published by Forvis Mazars, notes that AI has revolutionized the pace of cybersecurity, with attackers leveraging AI to automate reconnaissance, social engineering, and lateral movement to compress the attack cycle from days to hours or even minutes. The report emphasizes that the core challenge for cybersecurity in 2026 is speed, as attacks have shifted from data theft to operational leverage, causing downtime, reputational damage and team distraction. The report recommends that organizations adopt a "responsible AI defense" strategy that includes deploying phishing-resistant multi-factor authentication (MFA), implementing Identity Threat Detection and Response (ITDR), adopting an Extended Detection and Response (XDR) platform, and creating layered immutable backups. Governance first, human-computer collaboration, and transparency and auditability become the three principles of AI defense.

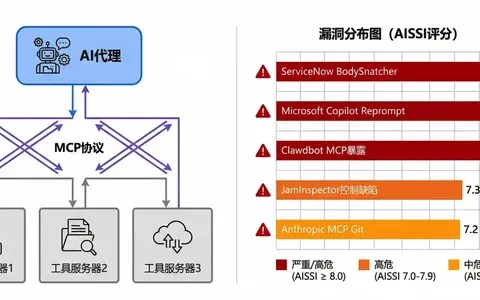

AI Security Incidents Surge in January 2026: MCP Vulnerabilities Become Major Threat to Enterprises

The January 2026 AI Security Incident Roundup released by PointGuard AI Research Labs shows a significant increase in the number and severity of AI security incidents this month, with several incidents scoring over 7.0 on the AI Security Severity Index (AISSI).Among them, Model Context Protocol (MCP)-related vulnerabilities emerged as a major threat category, including Clawdbot MCP exposure, JamInspector control plane flaws, and other high-risk incidents. In addition, the ServiceNow "BodySnatcher" vulnerability (CVE-2025-12420) and the Microsoft Copilot "Reprompt" attack received high risk ratings of 8.7 and 8.3 high-risk ratings, respectively. The report notes that attackers have shifted from experimental exploration to systematic exploitation of AI workflows, that cue injection attacks are still evolving, and that the majority of high-risk incidents stem from toolchain and protocol weaknesses rather than the models themselves.

Risk Insight: Shadow AI Detonates Personal LLM Account Data Breach

The latest Cloud Threat Report shows that employees using their personal accounts to access LLM tools such as ChatGPT, Google Gemini, Copilot and others has become one of the main channels for enterprise data breaches, with genAI-related data policy breaches averaging a whopping 223 per month, a double increase from last year. According to the report, the number of tips sent to generative AI applications in some organizations has increased six-fold in one year, with the head 1% enterprise submitting more than 1.4 million tips per month, which contain highly sensitive data such as source code, contract text, customer information and even credentials, which, once used for model training or secondarily stolen, will pose long-term irreversible risks to compliance and intellectual property rights. The commonality of this kind of Shadow AI is that it is "invisible and unmanageable": security teams tend to focus only on the official access to the big model, while ignoring the grey channels of browsers, personal accounts and mobile, and in the future it will be overlaid with personalized ads based on the context of the session and third-party plug-in ecosystems, which will further blur the boundaries of data. Enterprises should establish genAI usage policies and classification and grading norms as soon as possible, identify and block AI traffic with the help of CASB/SASE, prohibit personal LLM access by default for highly sensitive sectors, and introduce enterprise version of controlled LLM as an alternative, with supporting auditing and data minimization strategies.

ChatGPT Introduces Conversational Advertising: New Data Security and Compliance Challenges Behind AI Profit Models

OpenAI has announced that it is testing in-session ads in both the free and Go versions of ChatGPT, a commercialization adjustment that also brings "ad compliance and data security" to the forefront. Officials emphasize that they will not sell conversation data to advertisers, and that ads are not separated from answer logic, but they do not detail the types of data and processing paths used for personalized placement, which is a grey area that security and compliance teams need to focus on. From the technical path, chat advertising requires real-time feature extraction of conversation context, interest signals and user profiles, and then selecting "relevant sponsored content" through recommendation or sorting models, which requires the establishment of minimal collection, desensitization and use limitation controls in the log collection, feature generation, model training and push chain, or else it will easily evolve into "implicit painting". This requires the establishment of minimal collection, desensitization and use limitation controls in the log collection, feature generation, model training and push chain, otherwise it will be very easy to evolve into "implicit profiling + out-of-bounds reuse". For enterprise security teams, on the one hand, it is necessary to include such "conversational AI ads" in the risk assessment of third-party services, to check whether there are cross-regional data flows, regulatory red lines (such as minors, sensitive scene ad blocking strategies) and audit gaps; on the other hand, it is also necessary to reverse the examination of their own internal AI assistants and customer service robots to see whether there is the same "borrowed interaction" and whether there is the same "borrowed interaction". On the other hand, we should also examine our own internal AI assistants and customer service robots to see if there is the same impulse and secret logic of "borrowing interaction to make advertisements/portraits". It is foreseeable that the future of large model product security will expand from purely discussing "model overreach and prompt injection" to "hidden marketing boundaries in human-machine dialogues", and how to design transparent and controllable advertising and data governance mechanisms between sustainable profitability, user trust and regulatory requirements is becoming a key issue for the next generation. How to design a transparent and controllable advertising and data governance mechanism between sustainable profitability, user trust and regulatory requirements is becoming a key proposition for the next round of AI security practices.

Risk Insight: 2026 Global Enterprise Security Focus Shifts to AI Vulnerabilities

Report Core Findings:

WEF (World Economic Forum) conducted a questionnaire survey of 800 enterprise practitioners globally during August-October 2025, and the results were released in January 2026. The survey revealed that 94% of executives identified AI as the most significant driver of change in the cybersecurity landscape in 2026; 87% of respondents ranked AI-related vulnerabilities as the fastest growing cybersecurity risk. Compared to the 2025 survey, the percentage of organizations evaluating the security of AI tools increased dramatically from 371 TP3T to 641 TP3T.From an offensive and defensive perspective, organizations are both concerned about attackers using AI to accelerate the pace of attacks (721 TP3T concern) and are investing in AI defense tools.

Three core pitfalls of AI security:

The Top 3 risks specifically identified by the CSOs are, in order:

(1) Data Breach and Privacy Exposure (30%) - AI model training data is poisoned or sensitive information is extracted during inference;

(2) Adversary AI Capability Enhancement (28%) - Malicious actors use AI to generate phishing emails, adaptive malware, and false opinions;

(3) AI System Technical Security (15%) - AI-specific vulnerabilities such as model backdoors, privilege obfuscation, and prompt injection.

Meanwhile, the 73% enterprise has shifted from ”ransomware defense first” in 2025 to ”AI-powered fraud and phishing defense” in 2026.

AI Intelligentsia Permission Explosion Problem:

CyberArk and other security vendors report a key trend: non-human identities are about to become the number one cloud violation vector. By 2026, every AI intelligence will be an ”identity” - requiring database credentials, cloud service tokens, code repository keys, and more. As organizations deploy dozens or even hundreds of AI intelligences, these identities accumulate exponentially more privileges, making them a target for attackers. oWASP's new ”tool misuse” attack vector is particularly dangerous: an attacker can inject malicious data through a malicious data injector without modifying the AI's command prefix (system prompt). The new "tool misuse" attack vector in OWASP is particularly dangerous: an attacker can trick the AI into making unintended API calls, elevating privileges, or stealing data without modifying the AI's system prompt.

Forward-looking coping strategies:

Implement AI Identity and Access Governance (IAM): Assign minimum required permissions to each AI intelligence and audit its credentials and API call logs on a regular basis

Deploy expression and hint protection: add command injection detection to the input validation layer of the AI agent to quarantine untrusted external data sources

Establishing an AI supply chain trust system: reviewing the security sources of third-party AI models, plug-ins and data sources to prevent backdoor models from being deployed

Extending AI-aware SIEM: Traditional log analysis has struggled to cope with AI's high level of autonomy, requiring dedicated AI behavioral anomaly detection

Forming an AI security emergency response team: because traditional cybersecurity teams lack experience in emergency response to AI-specific threats

Trend Insights:

The year 2026 will be the turning point from ”AI-enabled security defense” to ”AI security governance systematization”. It is no longer simply ”using AI to combat malicious AI”, but to integrate AI risk awareness into the whole process of identity management, privilege management, audit logs, emergency response and so on. Those enterprises that still remain in the ”AI benefit theory” and ignore privilege management will face the biggest price.

AI Cyber Attacks Become a New Trend: 2025 Q4 Attack Sample Prediction Trial 2026 AI Security Risks

Security reports show that in the fourth quarter of 2025 there were multiple cases of cyberattacks utilizing autonomous AI Agents, with attackers significantly expanding their attack surface by automating intelligence gathering, lateral movement, and elevation of privilege through the intelligences. Some analyses point out that some national-level threat actors have already used AI Agents to execute the steps of the 80%-90% attack chain in actual combat, with speed and covertness exceeding that of traditional human hacker teams. Experts predict that, as big models and automation frameworks sink further, "autonomous AI attacks" could evolve into a new mainstream threat in 2026 that is more destructive than traditional ransomware and spear phishing, especially targeting critical infrastructure and cloud environments.

AI Fraud and Data Breaches Will Surge in 2026

AI will be one of the core threats to cybersecurity in 2026, with more than 8,000 data breaches and around 345 million records exposed globally in the first half of 2025 alone, according to Experian's latest forecast. Meanwhile, Experian and Fortune report that AI-driven fraud will continue to skyrocket in 2026, with losses already reaching about $12.5 billion in the previous year, and deep counterfeiting and smart phishing proliferating rapidly on financial, e-commerce and social platforms. According to the reports, AI tools are "democratizing" fraud capabilities, making it possible for low-skill attackers to batch-generate highly realistic text messages, voices and synthetic videos, making it difficult for traditional anti-fraud rules to recognize these new attack patterns in time.

Global Cybersecurity Outlook 2026: AI Has Become the Biggest Risk for Cybersecurity Attack Growth

The Global Cybersecurity Outlook 2026 report states that 87% of organizations surveyed identified AI-related vulnerabilities as the fastest-growing cyber risk since 2025, and that AI is strengthening both the offensive and defensive ends of the spectrum. According to the report, 77% of organizations have adopted AI in their security operations for phishing detection, anomalous intrusion response and user behavior analysis, but data breaches and model misuse emerged as one of the top concerns for executives. The percentage of organizations proactively evaluating the security of AI tools has increased from 37% to 64% compared to 2025, indicating a shift from "blindly embracing AI" to "prioritizing security governance".

BreachForums Dark Web Forums History Database Major Leak

Since 2022, BreachForums has been one of the world's largest and most notorious forums for data breaches and hacking deals. This underground data bazaar is not only a stage for hackers to show off their war chests, but it is also the curation point for many major data breaches and ransom campaigns.BreachForums was originally founded by Conor Fitzpatrick (ID "pompompurin"), and was taken over in 2023 after his arrest by ShinyHunters took over operations. It was later taken offline for MyBB 0day, and some notes were released at the time, the authenticity of which is unknown. In June of this year, France and the United States worked together to arrest several more core members, including ShinyHunters, Hollow, Noct and Depressed.

Core details of the incident

Leak source: allegedly from a member within the original BreachForums.

Leaked content: a zip file named breachforum.7z containing:

Full SQL database file: contains core data such as user registration information, credentials, etc.

User PGP key: may affect the security of encrypted communications.

Statement document: a long, stylized, "poetic" text (.txt), the content of which has been identified as possibly having AI embellishments, or as a leaker's statement.

Authenticity of data: The existing users have verified that the data is authentic and recent by verifying the temporary email address they have used in the document.

Downloaded from: https://shinyhunte[...] rs/breachforum.7z (Note: links have been rendered innocuous for security reasons, please do not access them directly).

Leakage data analysis (email domain ranking)

The statistical ranking of registered email addresses in the leaked data is as follows, clearly reflecting the preferences of the forum's user base, with a very high percentage of private and temporary email services:

Rank Mailbox Domain Name Number of Occurrences Service Type/Characteristics

1 gmail.com 239,747 Mainstream commercial mailboxes

2 proton.me 29,851 End-to-end encrypted privacy mailboxes

3 protonmail.com 12,382 End-to-end encrypted privacy mailboxes

4 onionmail.org 4,668 Anonymous encrypted mailboxes specializing in the Tor network

5 cock.li 4,577 Email hosting service emphasizing anonymity without personal verification

6 yahoo.com 4,478 Mainstream Business Email

7 qq.com 3,290 Mainstream commercial mailboxes

8 mozmail.com 2,395 Privacy forwarding mailbox provided by Firefox Relay

9 tutanota.com / tutamail.com 2,294 End-to-end encrypted privacy mailboxes

10 dnmx.org 1,441 Anonymous mail service

Data Analysis Interpretation:

High concentration of privacy services: more than half of the top 10 domains (Proton, OnionMail, Cock.li, Mozilla Relay, Tuta) are privacy-protecting services focused on anonymization, encryption or forwarding. This shows that BreachForums users are extremely anti-retroactivity and privacy-conscious.

"Room to Operate" Warning: When users refer to "high room to operate", they may be referring to the fact that an attacker can exploit the registration mechanism of these private mailboxes (e.g., without requiring cell phone number verification) to conduct correlation analysis, phishing, or launching targeted attacks against users of specific privacy services.