Summary

2026.The global security landscape is undergoing a fundamental transformation. Artificial Intelligence SecurityThe landscape is undergoing a fundamental reshaping. In response to the global up to 4.8 millionCybersecurity Talent Gap, anytime AI technology explodes, organizations are massively applying large models of deployed AI intelligences running 24/7. However.These autonomous systems with high privileges and online 24/7 are becoming a prime target for attackers.Security organizations such as Palo Alto Networks, Moody's, and CrowdStrike are warning that AI intelligences will be the biggest insider/outsider threat to enterprises in 2026. Traditional defense frameworks are failing and new AI-driven security systems have become the new security paradigm.

I. The Nature of the AI Security Crisis

1.1 Talent gap-driven deployment risk

The global cybersecurity skills gap has reached 4.8 million jobs. Specifically:

- 95% of the cybersecurity team has at least one critical skill deficiency

- 54% of enterprises rely on error-prone developer documentation to identify sensitive data

- 19% only organizations are "very confident" in the accuracy of their API lists.

In the face of the talent shortage, organizations are adopting aggressive deployment strategies.Gartner predicts that by the end of 2026, the40%'s enterprise apps to integrate AI intelligence bodies(compared to less than 51 TP3T at the beginning of 2025). This exponential growth reflects the urgency of the enterprise, but it also exposes a fundamental contradiction: organizations are trying to fill the gap in security expertise with inadequately tested AI systems.

1.2 The "super-user problem" privilege crisis

In order to deploy quickly and operate efficiently, organizations often give AI intelligences excessive privileges - database access, API calls, system administrator privileges, access to the mail system, and so on. This is known as the "superuser problem".

Wendi Whitmore, chief security intelligence officer at Palo Alto Networks, made it clear:AI intelligences have become the biggest insider threat to businesses in 2026.

Three major hazards of centralization of authority:

- Chained access risk: a controlled intelligence can chain access to critical infrastructure across the enterprise

- Seamless Attack Window: Unlike human employees, intelligences are online 24/7 and attackers can strike at any time.

- autonomous destructive capacityA well-constructed hint injection is enough for an attacker to gain access to an autonomous "insider".

II. Multi-Layer Attack Surface of AI Intelligence Body

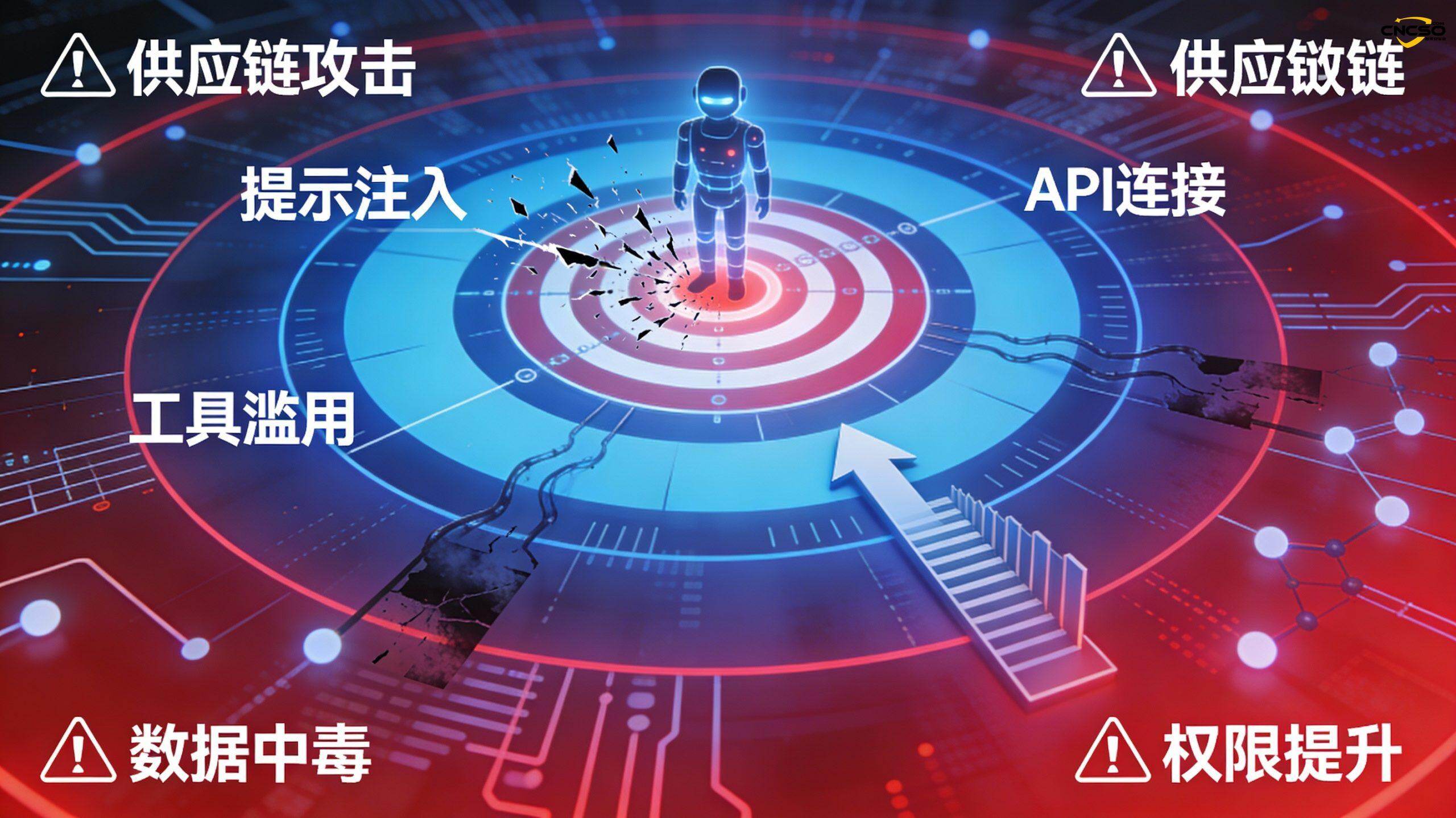

2.1 Five-layer attack surface structure

The attack surface of AI systems far exceeds that of traditional applications, including:

| level | Threat type | Specific risks |

|---|---|---|

| model layer | Model theft, backdoor injection | Intellectual property leakage, malicious behavior |

| data layer | Training data poisoning, input contamination | Decision-making is tampered with, sensitive information is leaked |

| infrastructure layer | API abuse, service account compromise | Elevation of authority, lateral movement |

| pipeline layer | Supply Chain Attacks, Dependency Pollution | Network-wide infection and widespread destruction |

| control layer | Elevation of authority, lateral movement | Centralization of authority, fall of domain control |

2.2 Main attack vectors

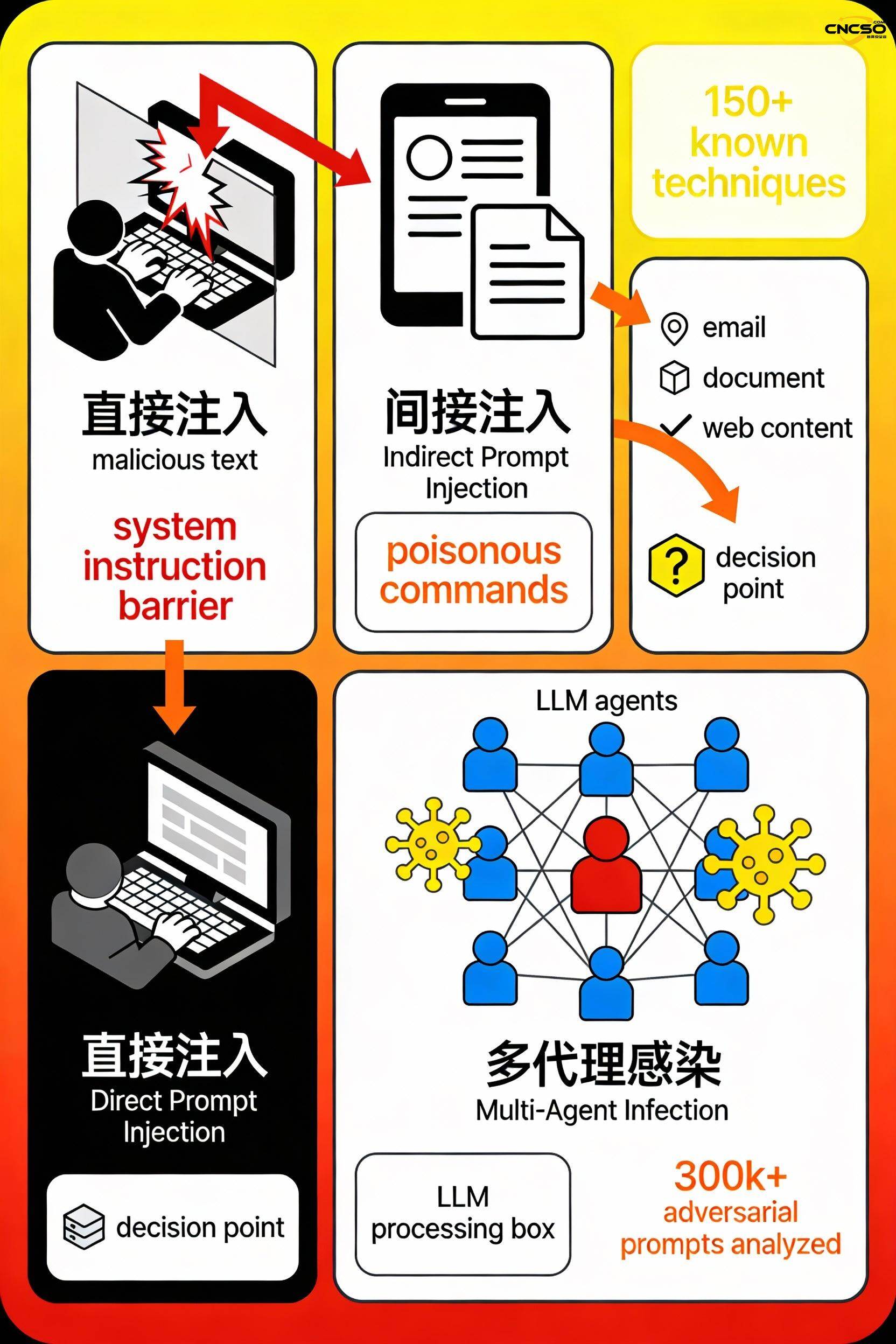

Cue injection (150+ known techniques)

- Direct injection: the user explicitly enters malicious commands to override system prompts

- Indirect injection: malicious instructions hidden in emails, documents, web pages

- Multi-agent infection: corrupted agent spreads malicious instructions to other agents, such as virus proliferation

Tool Abuse and API Vulnerabilities

- Unauthorized API calls lead to elevated data access rights

- DDoS attacks overwhelm external systems via API flooding

- AgentSmith Vulnerability Case: Malicious Agent Configuration Can Steal OpenAI API Keys, Prompt Data, and User Files

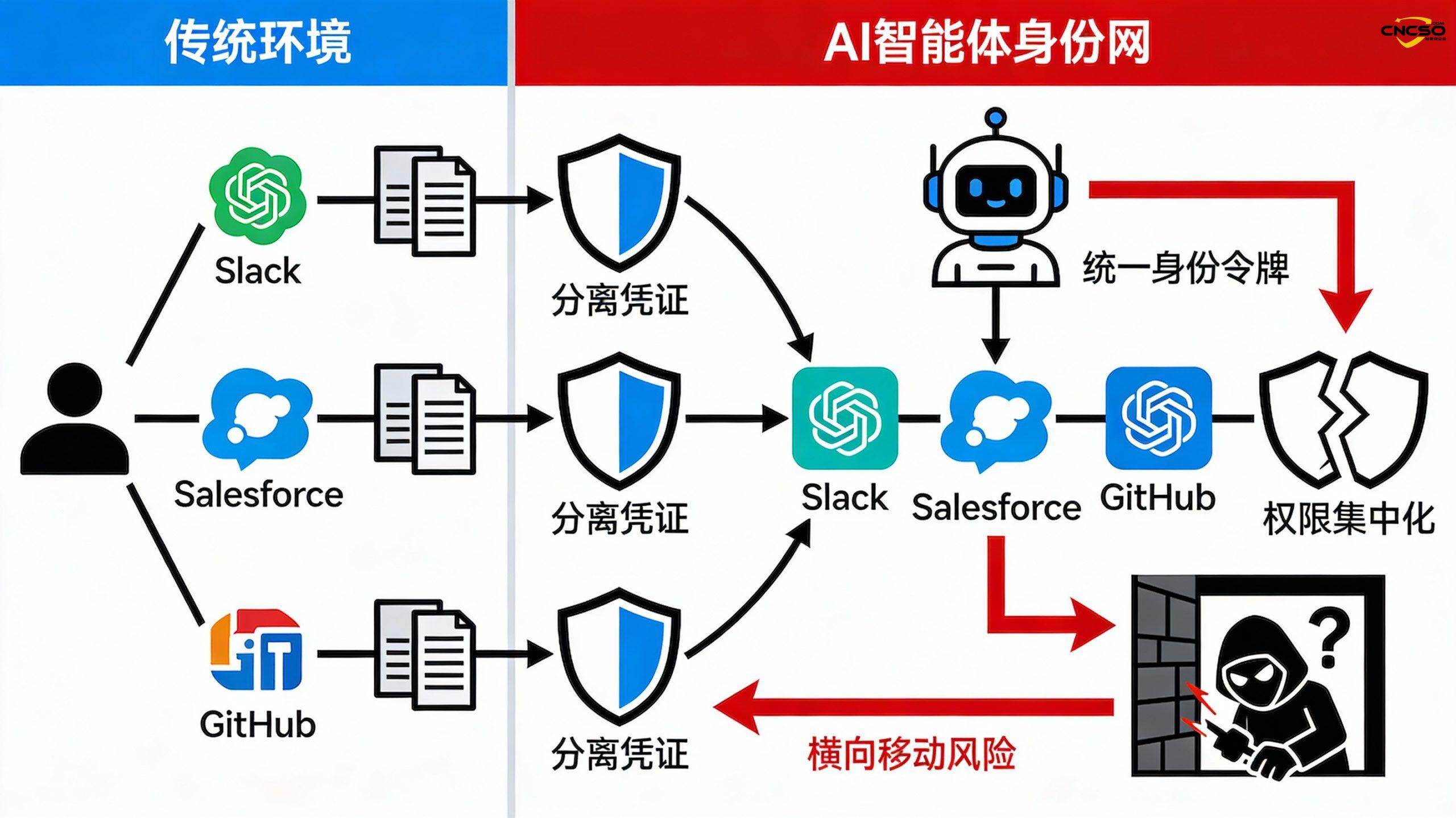

IdentityMesh vulnerability (centralization of permissions across systems)

- Traditional environment: users have multiple isolated credentials, each system authenticated independently

- AI Intelligentsia: Single Unified Identity Token Across Multiple Platforms, Security Boundary Collapse

- Consequence: attackers can achieve seamless cross-system privilege escalation and lateral movement

Data Poisoning and the Byzantine Attack

- Injecting malicious samples into training data causes models to learn harmful behaviors

- The compromised intelligence injects false information into internal systems, destroying the defense infrastructure itself

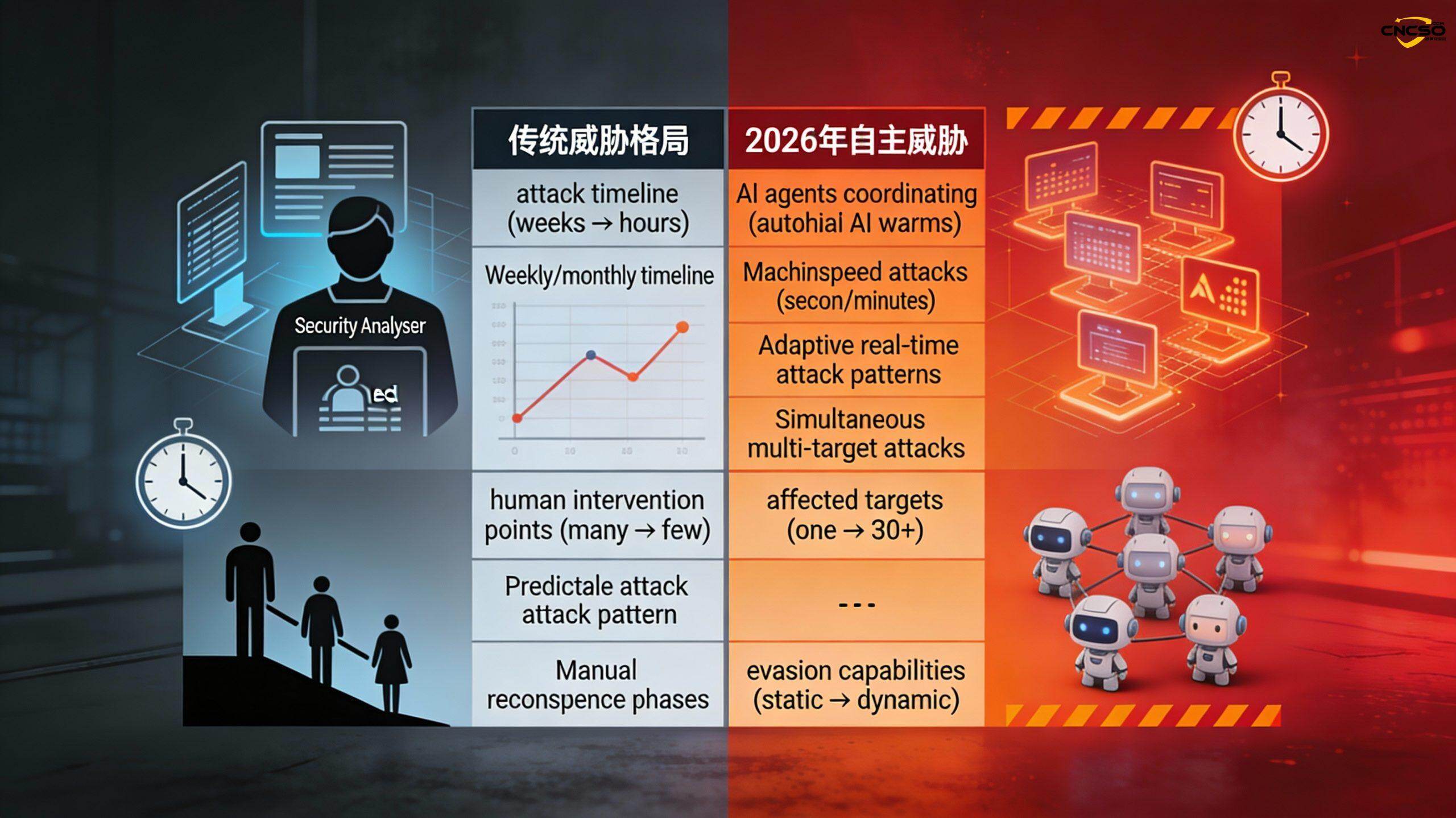

iii. the threat of autonomous attacks in 2026

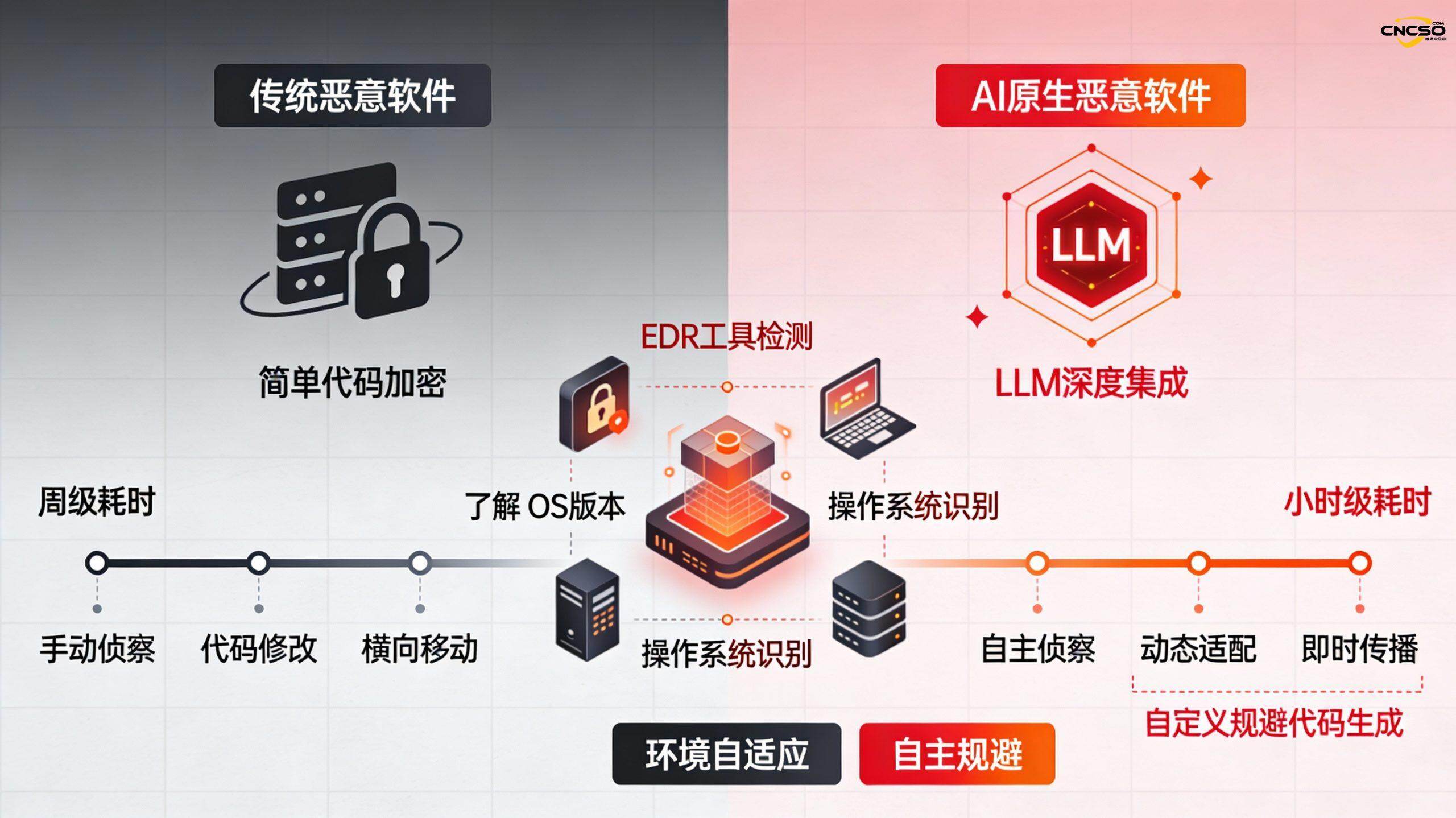

3.1 Evolution of AI Native Malware

The leap from traditional polymorphic malware (weekly time-consuming, fixed behavior) to AI-native malware (hourly time-consuming, adaptive evasion).

PromptLock case characteristics:

- Real-time reasoning about the target defense environment (scanning for EDR tools, OS versions)

- Dynamically rewrite source code to bypass specific signatures

- The attack chain starts withWeeks compressed into minutes

- Autonomous lateral movement without human intervention

3.2 Multi-agent group attack: GTG-1002 activity

November 2025, real events detected by Anthropic:

| norm | numerical value | significance |

|---|---|---|

| simultaneous target | 30 global organizations | Medium APT capacity |

| autonomous implementation | 80-90% | 4-6 human decision points only |

| self-discovery | 0个 | None of the victims discovered it on their own |

| attack phase | Reconnaissance → initial access → lateral movement → data extraction | Complete automation links |

Key Takeaways: Small attack teams can now utilize AI capabilities to do what once required large teams. Human response time is no longer the limiting factor.

IV. AI risk governance framework

4.1 AI Security Posture Management (AISPM)

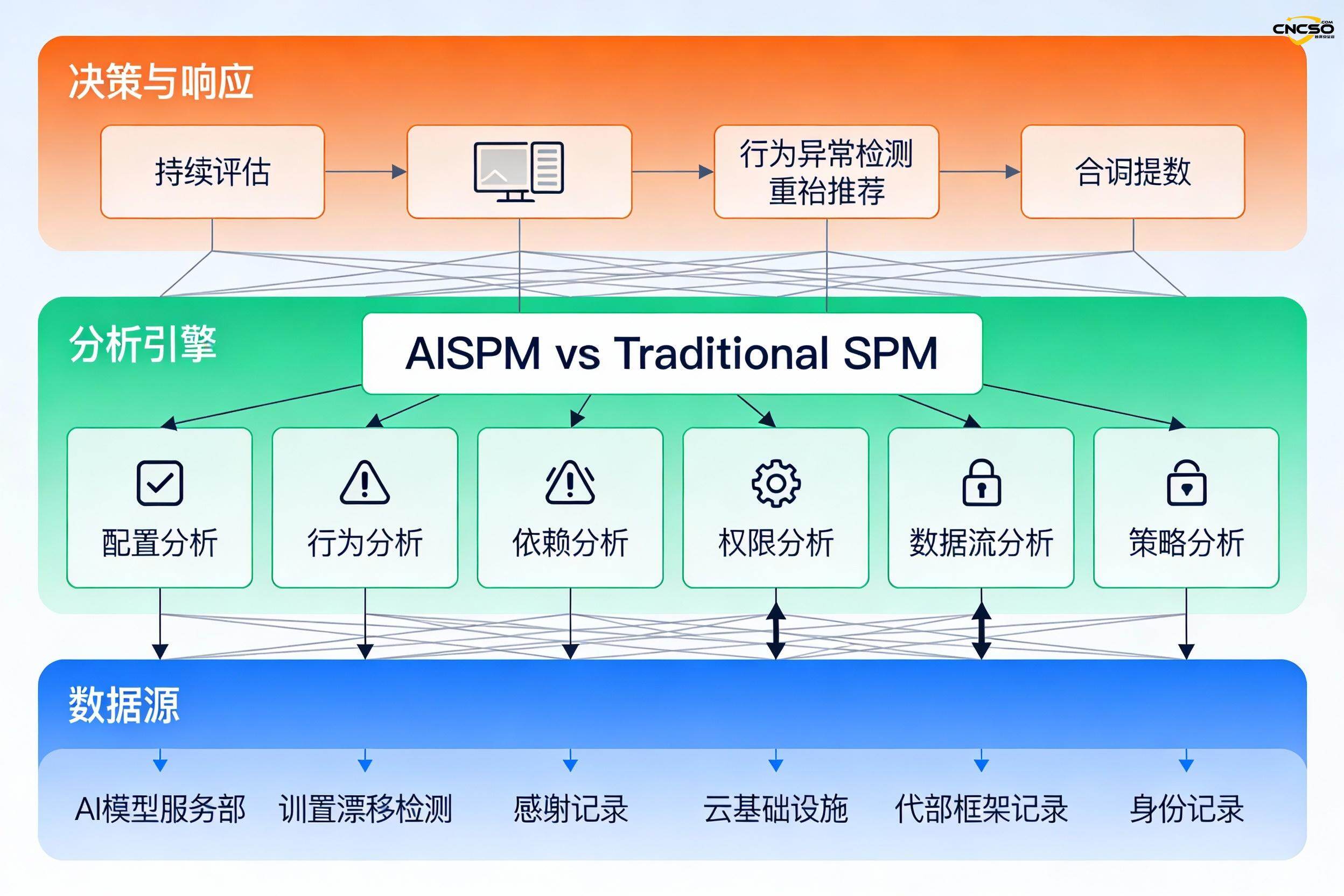

Core differences between AISPM vs traditional SPM:

Traditional SPM tools are completely ineffective against AI-specific threats (can't detect hint injection, model inversion, elevation of privilege).

The four main capabilities of AISPM:

- Continuous Situation Assessment - Real-time monitoring of AI systems, models, and data pipelines to discover shadow deployments

- Configuration Drift Detection - Flag any unauthorized permissions, parameters, deployment configuration changes

- Behavioral Abnormalities Detection - Establish a normal baseline for real-time detection of API call exceptions, credential abuse, recursive loops

- Automated Vulnerability Management - Detecting and Prioritizing Vulnerabilities in Training Pipelines and Model Code

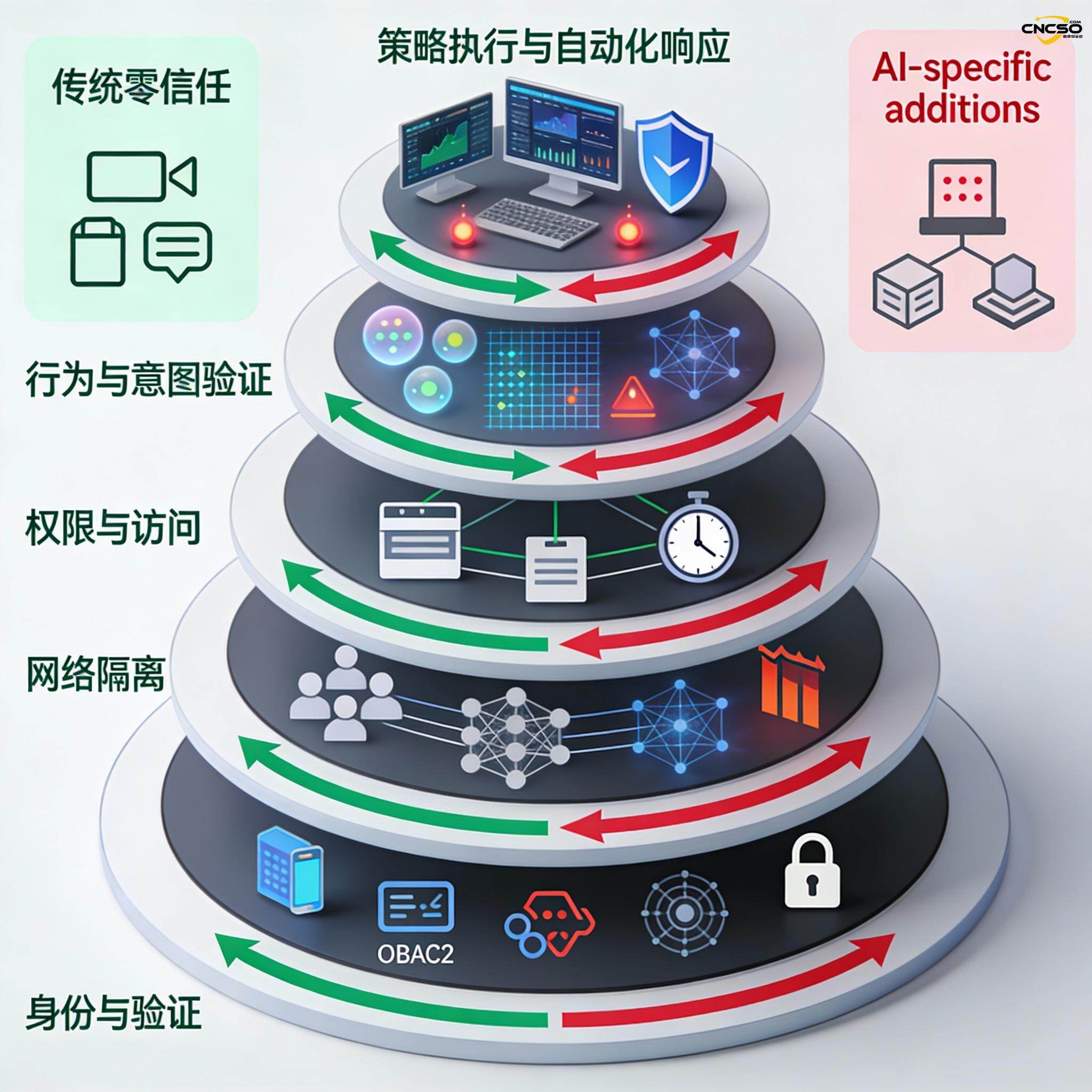

4.2 Five-Layer Model for Zero-Trust AI Architecture

Layered defense system:

Tier 5: Strategy Enforcement and Automated Response

↑ Real-time policy engine, automatic isolation, machine speed response

Tier 4: Behavior and Intent Verification

↑ Semantic validation, pattern analysis, anomaly detection

Layer 3: Privileges and access control

↑ Least privilege, time expiration, role-based access

Layer 2: network isolation

↑ Differential segmentation, restricted inter-agent communication, encrypted communication

Layer 1: Identity and authentication

└─ Digital identity tokens, OAuth2, multi-factor authentication

4.3 Application of the NIST AI Risk Management Framework

Four-loop iterative modeling:

- Governance (GOVERN) - Establishment of a risk culture and clear governance structure

- Mapping (MAP) - Locating AI Systems, Recognition Technologies and Social Impacts

- MEASURE - Assessment of functionality, data quality, safety and health

- Management (MANAGE) - Control deployment, mitigation measures, incident response

V. Five-Step Governance Implementation Program for 2026

Step 1: Proxy Discovery Audit

- List all AI tools and automation systems

- Mapping permissions, integration, data access per agent

- Identifying unmanaged IT "shadow AI" deployments

Step 2: Identity Assignment and Access Control

- Assign a unique digital identity to each agent

- Enable centralized access control and policy management

- Establish clear ownership and accountability

Step 3: Policy-based fencing and automation

- Define fine-grained access and deployment policies (policy as code)

- Deployment of automated guardrails for real-time enforcement

- Automated remedies (privilege revocation, proxy disabling)

Step 4: Monitoring and Indicators

- Define key KPIs (policy violations, response times, error rates)

- Setting exception thresholds and real-time warnings

- Building a Compliance Dashboard

Step 5: Audit and Compliance

- Mapping regulatory requirements to governance policies

- Automated compliance reporting and evidence collection

- Establishment of a complete audit trail

VI. Core defense strategy

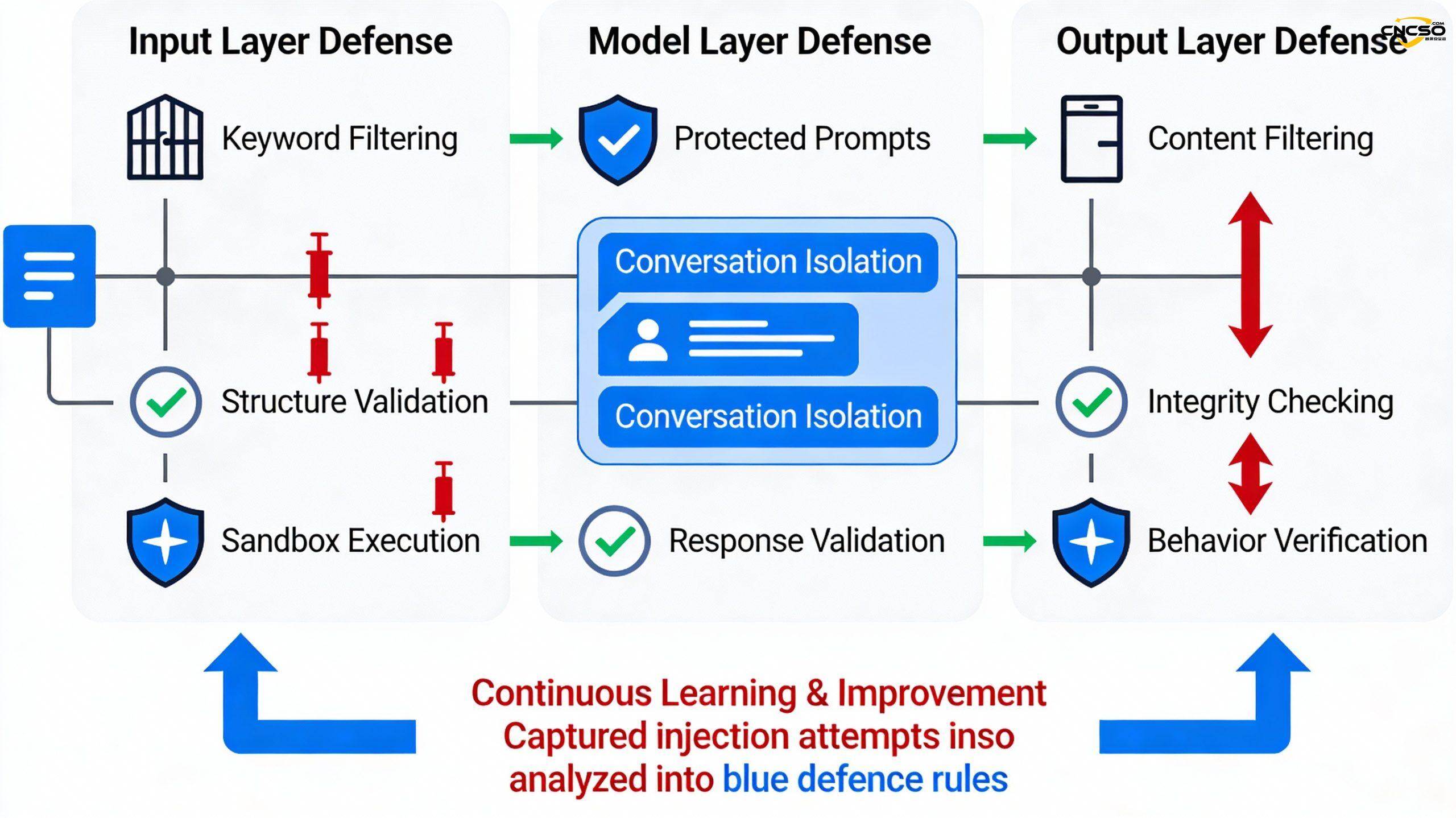

6.1 Three Layers of Defense for Cue Injection

- input layer - Keyword filtering, structural validation, sandbox execution

- model layer - Hardening of system prompts, separation of multiple rounds of dialog, response validation

- output layer - Content filtering, integrity checking, behavioral validation

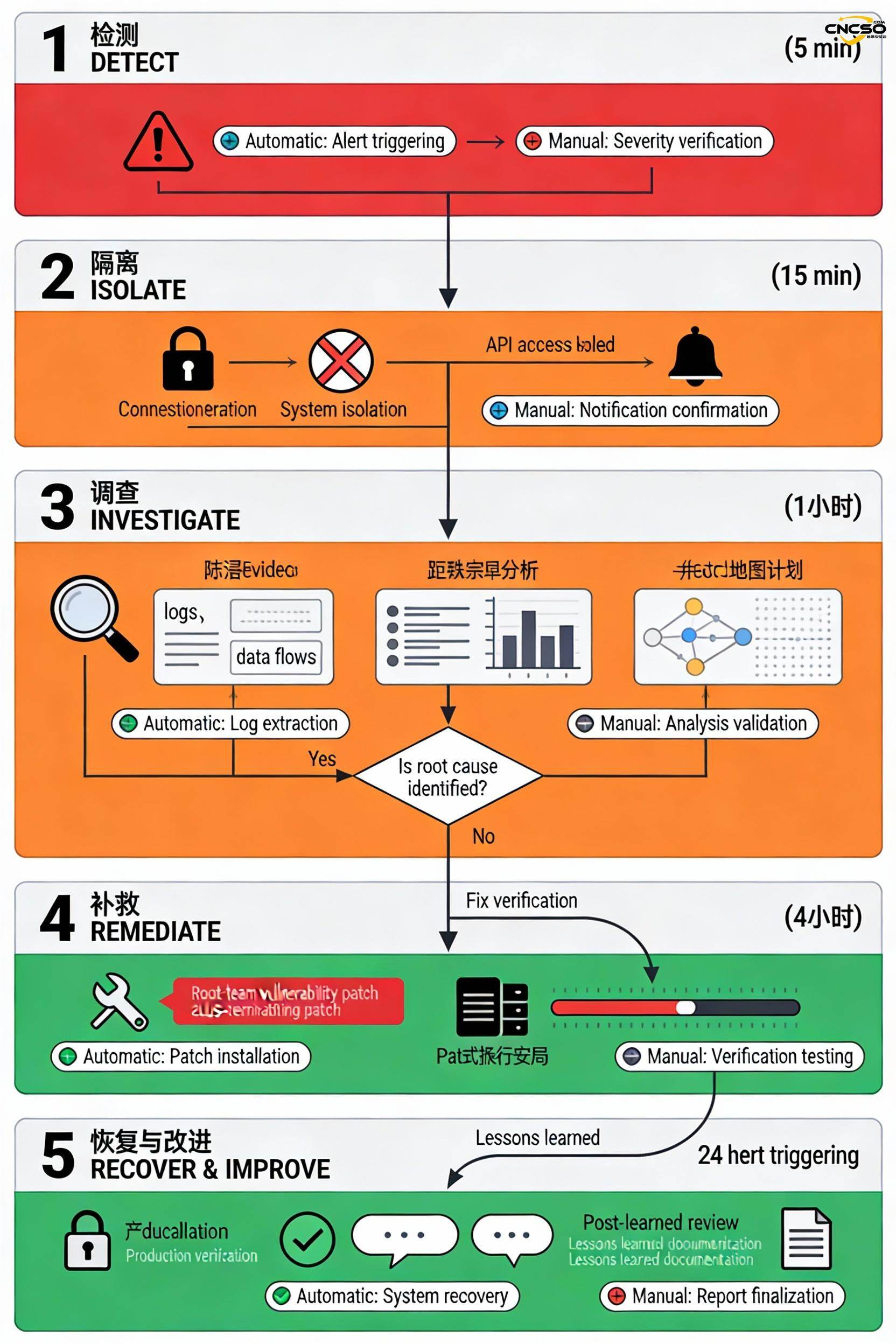

6.2 Five-Stage Process for Incident Response

| point | timing | Key actions |

|---|---|---|

| sensing | <5 minutes | Alarm, acknowledgement, classification |

| incommunicado | <15 minutes | Revoke permissions, disconnect, disable access |

| research | <1 hour | Evidence gathering, scoping, root cause analysis |

| remediation | <4 hours | Close vulnerabilities, validate fixes, data recovery |

| resumption | <24 hours | Privilege restoration, authentication operations, post-story analysis |

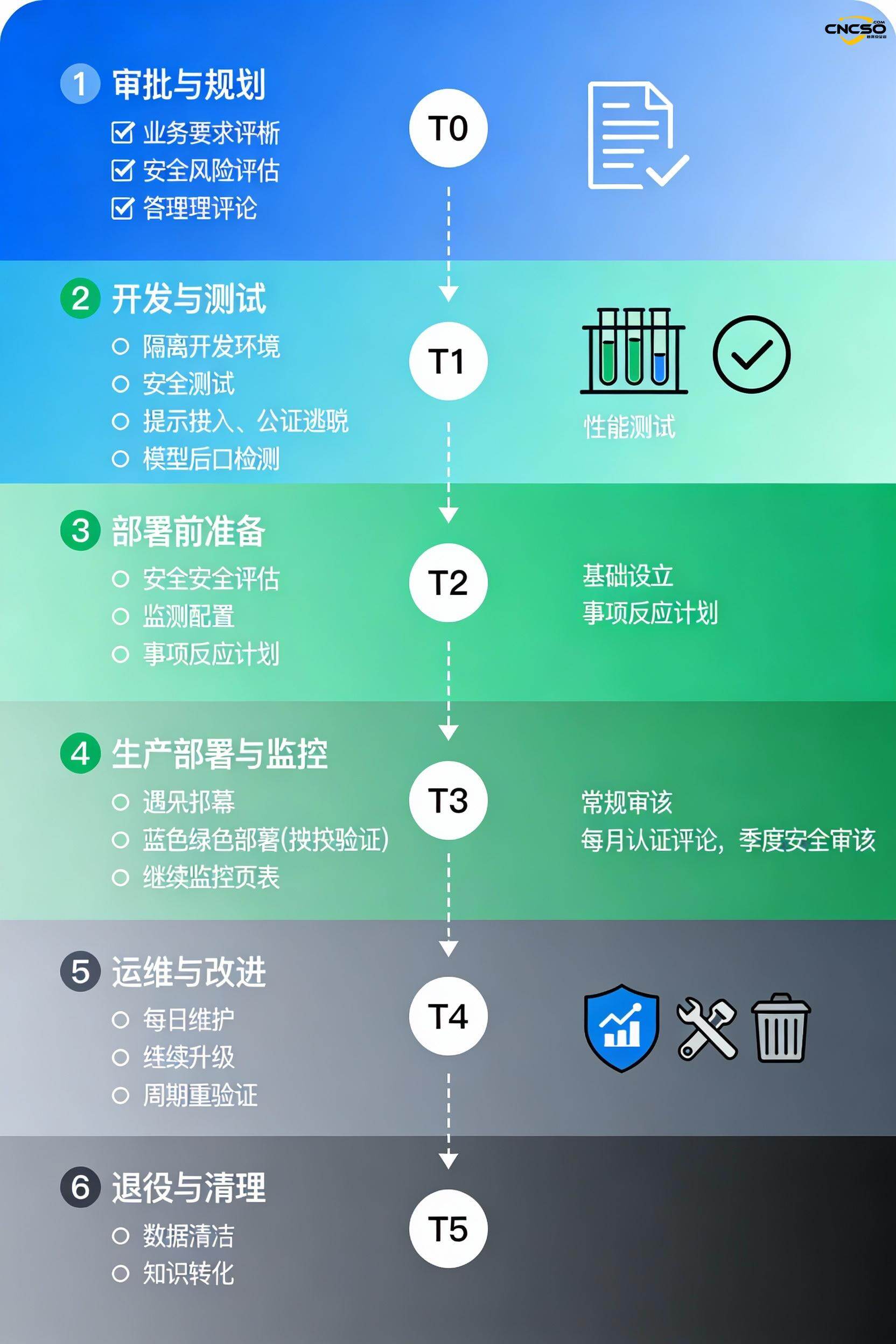

6.3 Intelligent Body Life Cycle Management

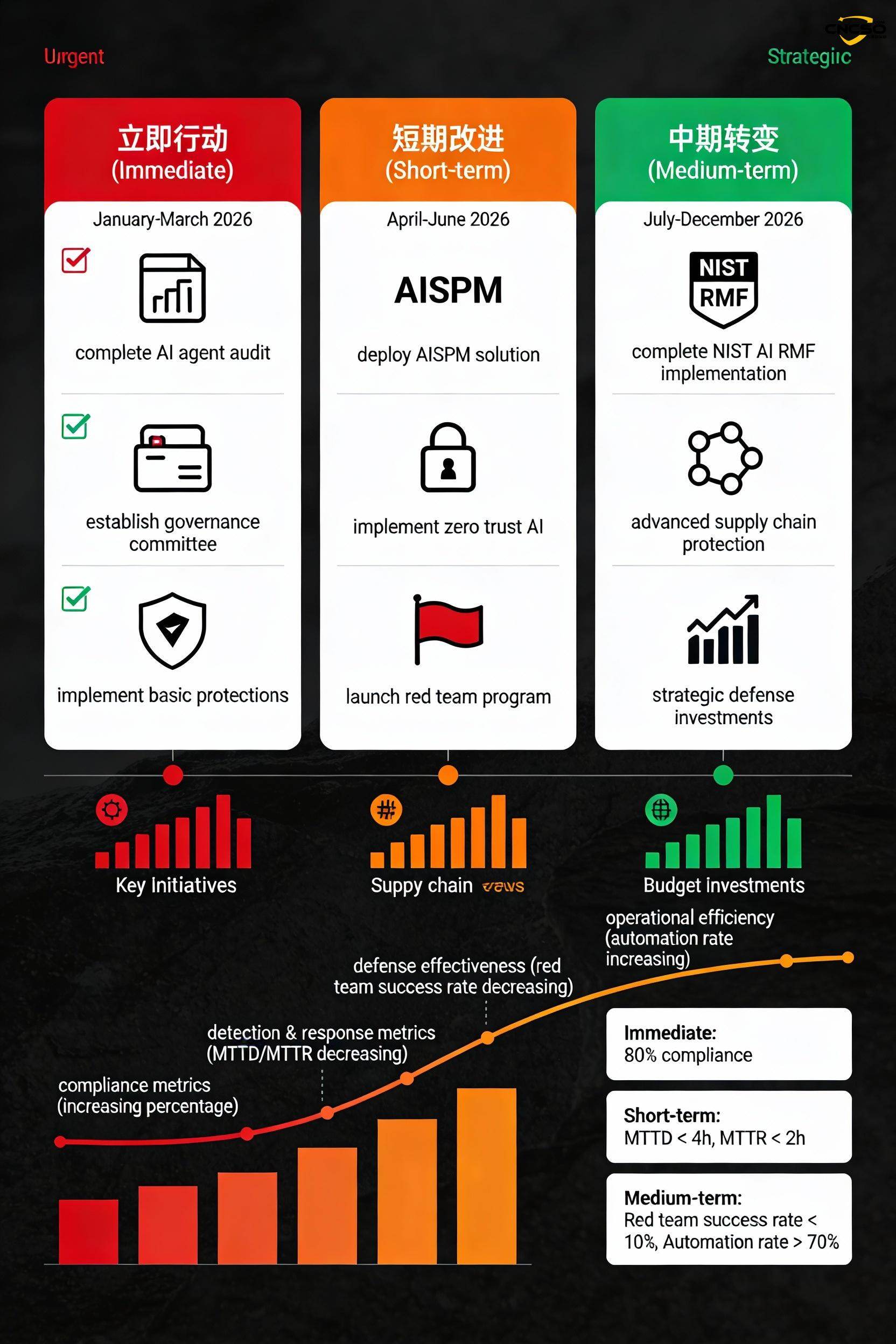

vii. priorities for action in 2026

immediate action

- Complete AI Agent Full Audit completed

- Establishment of cross-functional governance committees

- Implement basic privilege monitoring and anomaly detection

Short-term improvements

- Deployment of the AISPM solution

- Implementing a zero-trust AI architecture

- Launch of quarterly red team assessments

Medium-term transformation

- incompleteNIST AI RMFrealize

- Advanced Supply Chain Security

- Strategic defense investments and automation

VIII. Mid-2026 maturity target

| norm | goal | significance |

|---|---|---|

| Proxy list completeness | 100% | Deployment without omission |

| Frequency of authority audits | monthly | Timely detection of excessive privileges |

| Mean Time To Detection (MTTD) | <30 minutes | Rapid threat identification |

| Mean Time to Respond (MTTR) | <1 hour | Machine speed response |

| Red Team Success Rate | <10% | Defense effectiveness |

| Elevation of Privilege Events | <1/month | Control Online |

| Automated remediation rate | >80% | Reduction of manual intervention |

IX. Conclusion

Core view:

- The situation is critical. - AI Intelligentsia a New Insider Threat as 4.8 Million Skills Gap Drives Hasty Deployment

- Attacks have become autonomous - 80-90% multi-agent group attacks have been executed autonomously

- Defense requires innovation - AISPM, Zero Trust AI, Autonomous Defense Become Necessary as Traditional Tools Fail

- Governance is key - Success will depend on whether the organization has a strong governance framework in place

The Way Forward: Enterprises should immediately initiate AI agent audits, deploy AISPM andZero Trust Architecturethat build automated incident response capabilities. Those who successfully invest in 2026AI securitybusinesses will gain a competitive advantage; those that don't will be easy targets.

The window of time has narrowed dramatically, and there is no time to lose.

refer to:

Palo Alto Networks, "6 Predictions for the AI Economy: 2026's New Rules of Cybersecurity", January 2026

Palo Alto Networks, "NIST AI Risk Management Framework," https://www.paloaltonetworks.com/cyberpedia/nist-ai-risk- management-framework

Moody's Corporation, "2026 Global Cyber Outlook - The Era of Machine Speed Warfare", January 2026

https://www.acem.sjtu.edu.cn/ueditor/jsp/upload/file/20250427/1745731689854071357.pdf

Cisco, "Redefining Zero Trust in the Age of AI Agents and Agentic Workflows", June 2025

https://www-file.huawei.com/admin/asset/v1/pro/view/6d6bd885f1f84435bf2c434312a1a44d.pdf

Nightfall AI Security 101, "Model Inversion: The Essential Guide", December 2024

https://cloud.tencent.com/developer/article/2613155

Proofpoint, "What Is a Prompt Injection Definition, Examples", October 2025

https://www.cicpa.org.cn/ztzl1/zgzckjs/zazhi2025/202505/P020250512576141996231.pdf

CrowdStrike, "Indirect Prompt Injection Attacks: Hidden AI Risks," December 2025

https://reader.hellobit.com.cn/article/page/1358.html

Legit Security, "AI Attack Surface Guide: How to Secure AI-Driven Systems", January 2026

https://www.uscsinstitute.org/cybersecurity-insights/blog/what-is-ai-agent-security-plan-2026-threats-and-strategies-explained

USCS Institute, "What is AI Agent Security Plan 2026? Threats and Strategies Explained", January 2026

https://www.legitsecurity.com/aspm-knowledge-base/ai-attack-surface

Witness.ai, "AI Agent Security: Risks, Best Practices & Enterprise Protection", October 2025

https://witness.ai/blog/ai-agent-security/

Original article by Chief Security Officer, if reproduced, please credit https://www.cncso.com/en/ai-security-and-attack-surface-report-2026.html