I. Preface

2025.AI(AI) has moved from the lab to large-scale applications, while also profoundly changing the offensive and defensive landscape of cybersecurity. According to authorities such as the Verizon Data Breach Investigations Report (DBIR), IBM's annual Cost of a Data Breach Report, CrowdStrike Ransomware Investigation, and Microsoft's Digital Defense Report, the current year has witnessed an explosion of AI-driven cyberattacks. The 47% increase in AI-driven cyberattacks reported globally was accompanied by a rapid evolution in defense technologies, with organizations using AI defenses saving an average of $1.9 million in breach costs compared to their peers who don't employ these technologies.

This report provides a comprehensive analysis of the global and Hong Kong cyber threat landscape in 2025, with insights into how AI is reshaping the cost analysis of attackers and defenders, the most affected industries and regions, real-life case insights, the economic impact of security breaches, and key defensive actions organizations should take. The report is designed to provide decision makers with data-driven insights to help organizations make informed security investment decisions in an increasingly complex threat landscape.

II. Threat posture in 2025

2.1 Global Cyber Threat Overview

The 2025 cyber threat landscape is characterized by significant acceleration and escalation. According to the data, the average number of cyberattacks experienced by organizations per week has doubled over the past four years, from 818 in the second quarter of 2021 to 1,984 during the same period in 2025, an increase of 58% in two years.This accelerating trend is a direct reflection of the widespread use of AI technologies in the attack chain.

Key Statistics:

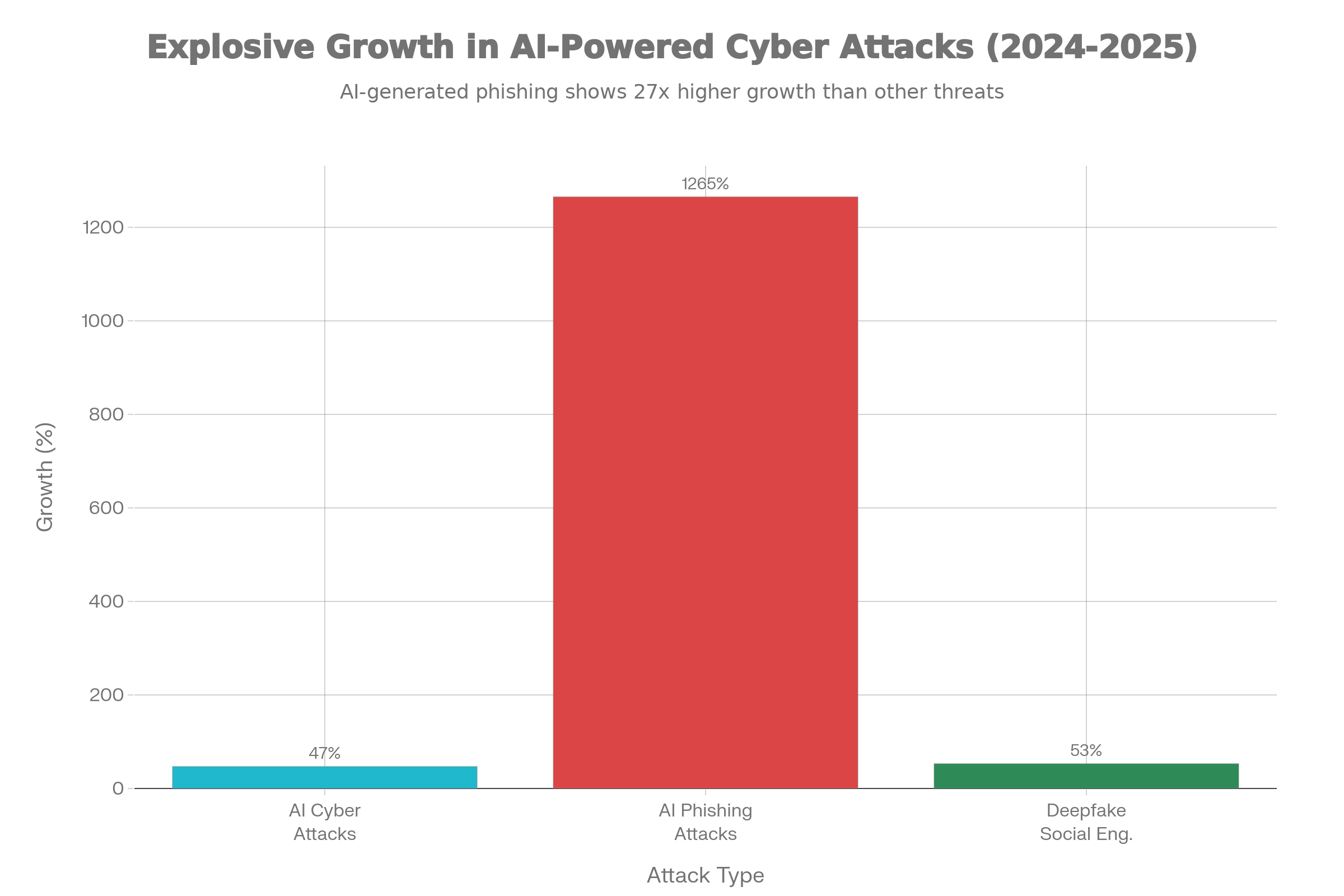

| norm | 2024 | 2025 | growth rate |

|---|---|---|---|

| AI-driven cyberattacks | cardinal number | Add 47% | 47% |

| AI-generated phishing attacks | cardinal number | Increase of 1,265% | 1,265% |

| Deep Fake Social Engineering | cardinal number | Add 53% | 53% |

| Average number of attacks per week | 1,260 | 1,984 | 58% |

| Security Leaders Expect Daily AI Attacks | cardinal number | 93% Report | - |

AI-driven cyberattacks have become a global threat. The estimated global losses amount to $30 billion. In these attacks, AI is used to increase the credibility of phishing emails, generate deep forgeries to perform identity impersonation, automate intrusion steps, and detect exposed AI infrastructure.

2.2 AI-driven specific attacks

Industrializing AI for phishing emails

In 2025, 82.61 TP3T of phishing emails use AI technology. These emails are no longer simple spam, but highly personalized social engineering attacks. According to the data, phishing emails using AI technology are written 401 TP3T faster and are able to:

-

Matching brand and personal tone of voice

-

Integrate authentic details from LinkedIn, historical emails and public records

-

Create fully grammatical messages without traditional spelling errors

-

Cite the project and invoice number in which the victim was actually involved

This results in email open rates of up to 781 TP3T and malicious link clicks of up to 211 TP3T.Of particular note is the increase in the proportion of AI-generated phishing emails from approximately 401 TP3T to 831 TP3T compared to 2024.

Deep Fake and Sound Cloning

Deep forgery-driven fraud attacks spiked by 2,1371 TP3T and now account for 6.51 TP3T of all fraud attacks. in 2025, AI-driven deep forgery caused a 531 TP3T increase in social engineering incidents, and a 2,331 TP3T increase in social engineering and fraudulent claims. generative AI was used:

-

Create realistic video and audio to impersonate executives

-

Identity impersonation through text synthesis techniques

-

Multi-channel fraud combining multiple media types

AI Acceleration for Ransomware

CrowdStrike's 2025 Ransomware Survey reveals that 76% of global organizations can't match the speed and sophistication of AI-driven attacks.48% of organizations see AI-automated attack chains as the biggest threat.85% report that traditional detection methods are becoming obsolete for AI-enhanced attacks.AI is used:

-

Automate intrusion steps to accelerate time from initial access to encryption

-

Optimizing ransomware payloads to evade detection

-

Automate data extraction and sales processes

-

Personalized blackmail demands and negotiation tactics

2.3 Massive Exposure of AI Infrastructure

A mid-2025 Trend Micro scan shows that serious misconfigurations in AI applications are creating direct attack paths. These are not zero-day vulnerability issues, but underlying security hygiene deficiencies:

-

Over 200 fully unprotected Chroma servers(Vector database) Data can be read, written or deleted by anyone with network access.

-

Over 10,000 Ollama serversExposure to the Internet (increased since 3,000 in November 2024)

-

2,000 Redis serversno authentication

-

MCP (Model Context Protocol) Server for 72%Exposure to at least one sensitizing capacity

-

50% proxy connection to 3 or more MCP servers"High risk" status

These exposures allow attackers:

-

Stealing embedded and confidential documents

-

Compromising AI models through data poisoning

-

Perform vector drift and hint injection attacks

-

Moving laterally in the environment

Third, a cost-benefit analysis of how AI can reshape attackers

3.1 Mechanisms for AI to Reduce Attack Costs and Increase Scale

Analyzed from an economic perspective, AI is fundamentally changing the business model of cybercrime. While traditional cybercrime requires a lot of manual labor, AI technology enables attackers to automate on a large scale at a very low marginal cost.

Changes in cost structure:

| attack phase | Cost of traditional methods | AI-assisted costs | Efficiency gains |

|---|---|---|---|

| Phishing Email Writing | High (research needed) | Low (automated) | 40% Acceleration |

| target identification | moderate | Low (data aggregation) | simplify significantly |

| social engineering | moderate | Low (depth falsification) | Credibility +53% |

| Payload customization | your (honorific) | lower (one's head) | automatic generation |

| Detection circumvention | your (honorific) | lower (one's head) | Polymorphic Malware +76% |

Specific economics indicators:

What would have taken an attacker hours to research and compose a personalized phishing email can now be done in minutes with generative AI, which translates to reducing the initial cost of the attack by more than 801 TP3T. At the same time, the success rate of AI-generated emails has increased from 5-101 TP3T to 15-201 TP3T with traditional methods, which means that the attacker's ROI has increased dramatically.

3.2 Economic costs of attacking scale

The most important economic benefit of AI is scale. While traditional targeted attacks may target specific organizations, AI-generated attacks can target millions of targets simultaneously. Based on the data, generative AI tools and automation enable attackers to create phishing emails 40% faster than manual methods.

In the Business Email Compromise (BEC) space, attackers used to have to conduct weeks of reconnaissance to identify suitable targets and payment processes. Now, with AI analyzing LinkedIn, public financial reports and acquisition announcements, the same analysis can be done in a matter of days. This allows an attack team to run campaigns against hundreds of organizations simultaneously.

3.3 Defense ROI and Cost Variance

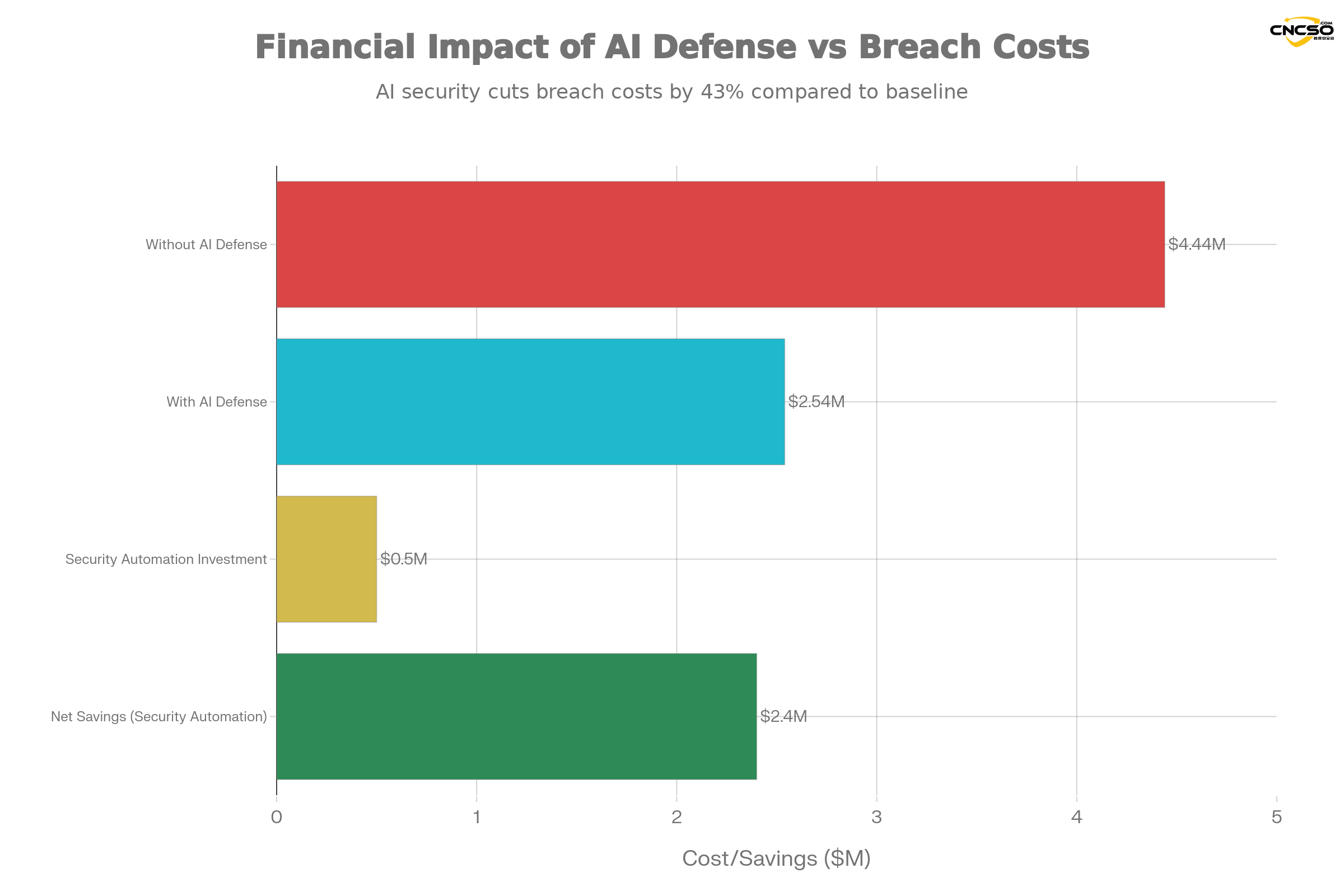

Contrast this with the cost advantage for defenders. Organizations using AI defenses save an average of.

-

Save $1.9 million per leak(versus organizations that do not use AI defense)

-

Reduction in testing time by an average of 80 days

-

Leakage life cycle reduced to 241 days(Shortest in 9 years)

-

Cost per record reduced from $234 to $128(AI automated detection)

This creates a paradox: AI brings down the cost of attacking dramatically, but it also brings down the cost of effective defense much faster. The key difference is that defenders can invest once, against all attacks, while attackers must customize for each target.

IV. Most affected industries and regions

4.1 Risk assessment by industry

Financial services (highest risk)

The financial services industry faces the most AI-powered cyberattacks. Deep example survey shows:

-

45%'s financial services organizationAI-driven cyberattacks in the last 12 months

-

12.81 TP3T for B2B finance companiesEncountering ransomware

-

1,338,357 Banking Trojan Attacksdetected

-

Average leakage costs in the financial sector continue to remain in the range of $4.8-$5.0M

Financial institutions are particularly vulnerable to AI-driven BEC attacks because:

-

Transaction authorization processes are often conducted under time pressure

-

Large transfers are a common business practice

-

Attackers can reap huge direct financial rewards

Health care (high mortality rate)

Healthcare faces multiple threats:

-

1,710 security incidents(Verizon DBIR 2025)

-

1,542 confirmed data breaches

-

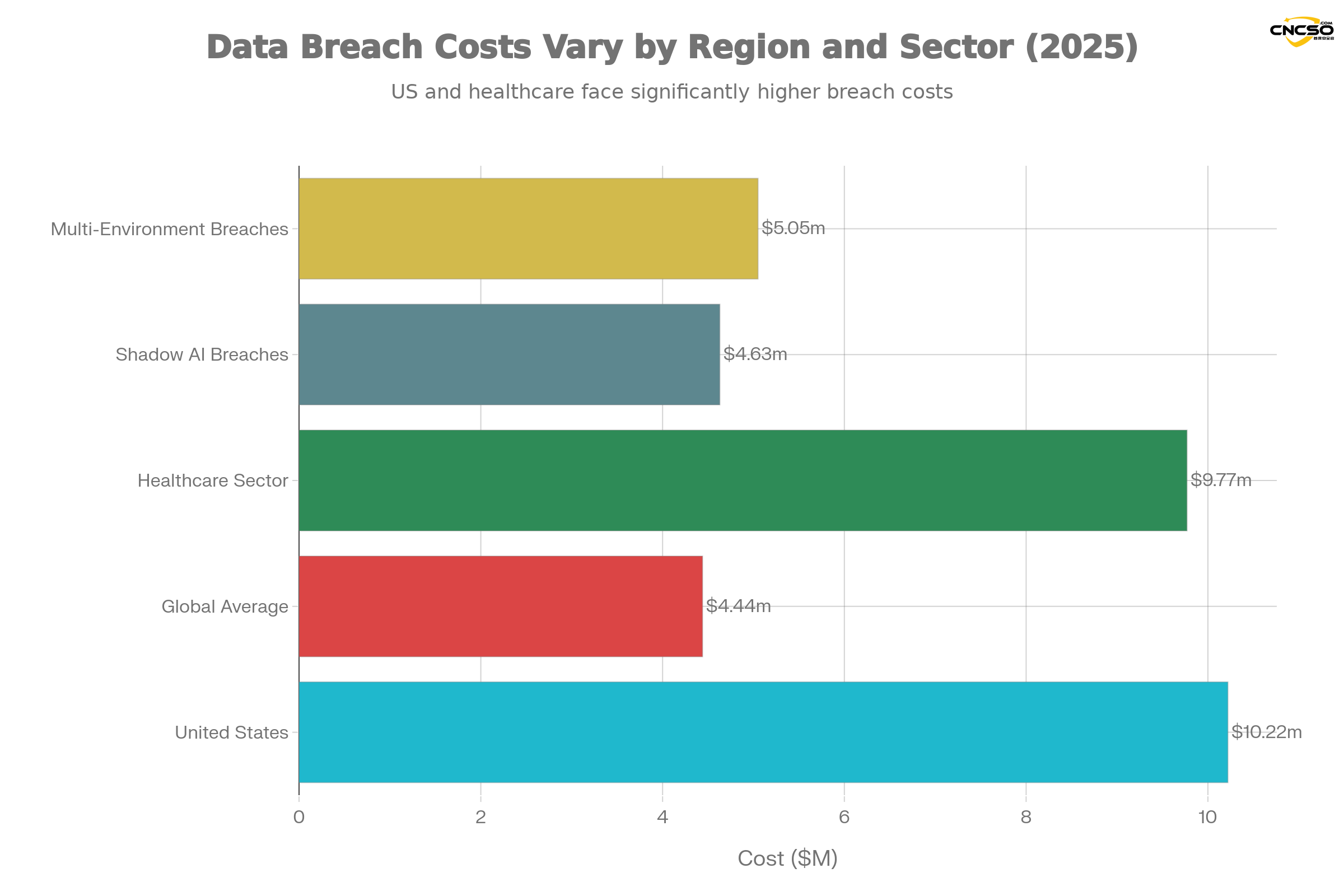

Average leakage cost $9.77M(highest in the industry)

-

Phishing Attacks Surge on 442%(second half of 2024)

-

Major threats come from ransomware, identity attacks and AI-driven deep forgeries

The healthcare industry is a high-value target because:

-

Patient data has lasting black market value ($150-$300 per medical record)

-

Operational disruptions lead to life safety risks, prompting quick ransom payments

-

Strict HIPAA Compliance Requirements Increase Breach Costs

-

Ransomware Gangs Know Healthcare Organizations Are Subject to Strong Payment Dynamics

Educational institutions (high growth threat)

The education sector is becoming the new frontier for ransomware:

-

217 ransomware attacks(April 2023-April 2024)

-

Year-on-year growth of 35% or more

-

U.S. Accounts for 56% of Known Attacks Worldwide

-

Education was the fourth most affected sector globally, after manufacturing

Educational institutions are particularly vulnerable because:

-

Limited IT resources and budget

-

High value of student data

-

Operational disruptions have a serious impact on the school year

-

Open network environment for easy initial access

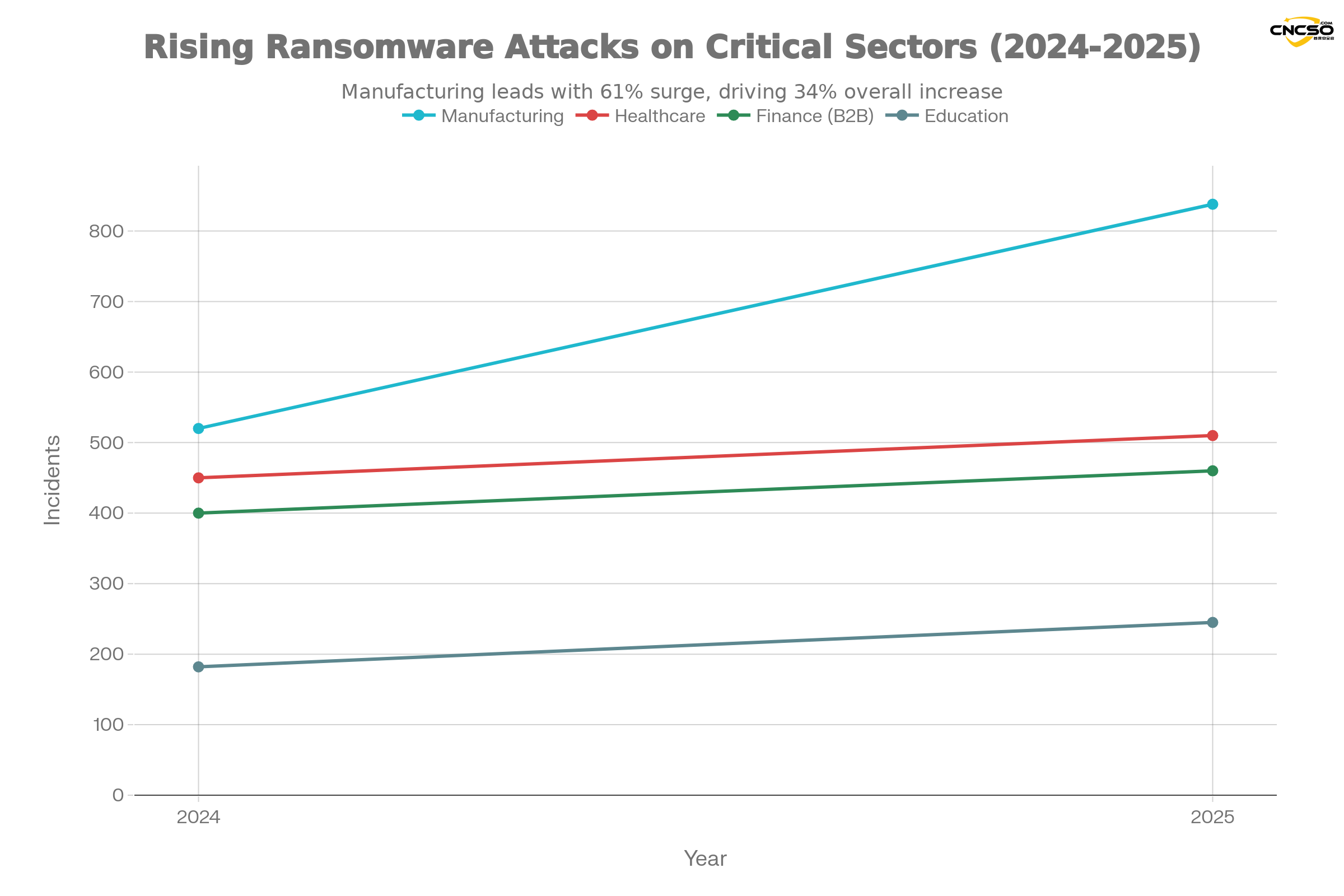

Manufacturing (fastest growing)

Manufacturing is the fastest growing target for ransomware in 2025:

-

838 ransomware attacks(61% compared to 520)

-

34% growth in global ransomware incidentsTargeting key sectors

-

Highly digitized and interconnected systems make them vulnerable to attack

-

Convergence of operational technology (OT) and IT systems increases exposure

4.2 Area risk analysis

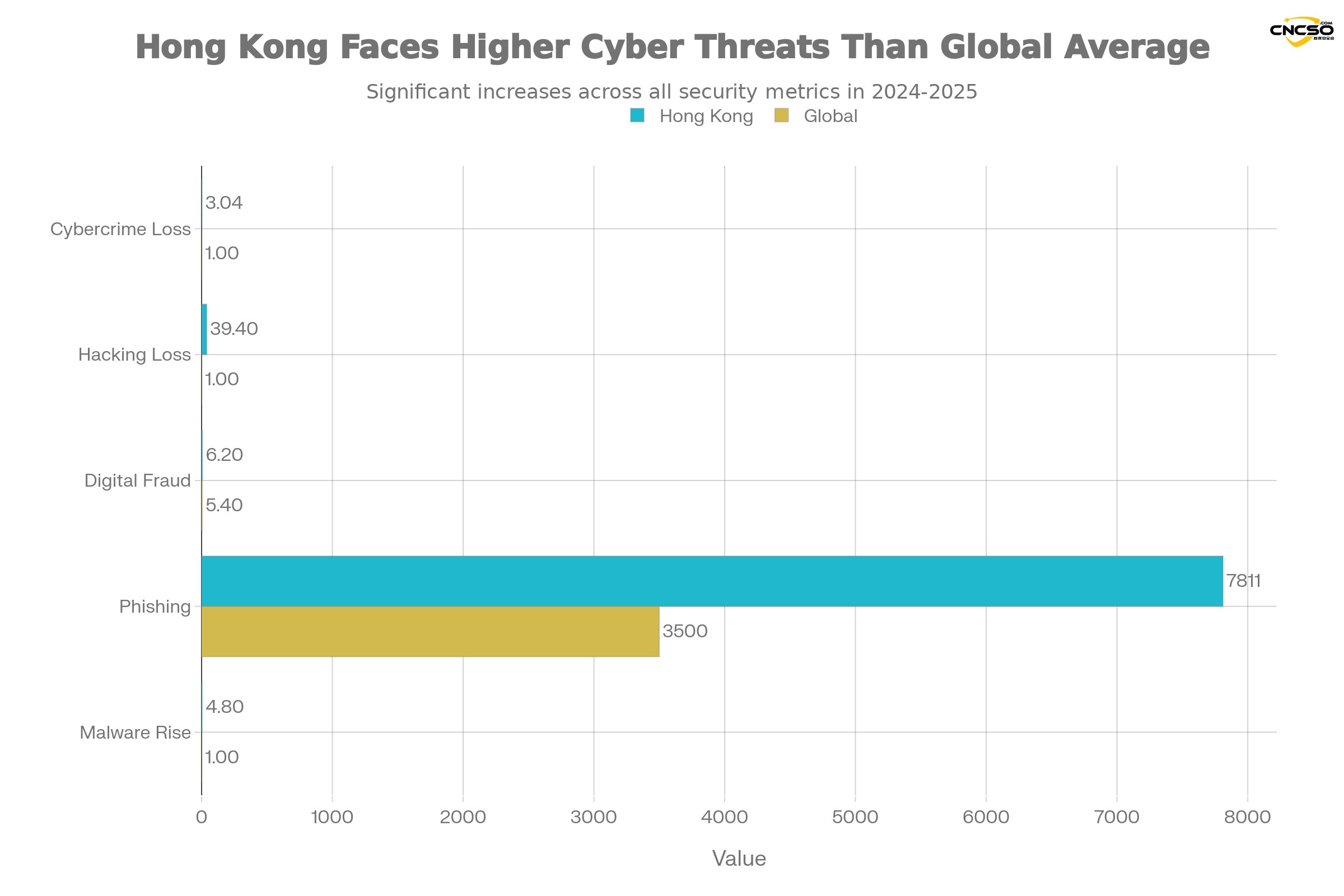

Hong Kong Special Administrative Region

Hong Kong faces a significantly higher level of cyber threats than the global average:

| norm | 2024-2025 data | global comparison |

|---|---|---|

| Cybercrime losses (H1 2025) | HK$3.04B (+15% YoY) | +15% Growth |

| Hacking losses (H1 2025) | HK$39.4M (+10x) | 10-fold surge |

| Digital fraud rate | 6.2% | Global average 5.41 TP3T, high 151 TP3T |

| Retail sector fraud | 17.8% | Year-on-year growth of 113% |

| Number of phishing incidents | 7,811 (+108%) | Worst in 5 years |

| malware incident | 4.8-fold increase | rise significantly |

| phishing link | 48,000+ (+150%) | spike |

The Cyber Security and Technology Crime Division of the Hong Kong Police Force said that while the number of hacking attacks had dropped slightly, the huge rise in financial losses indicated that the threat was escalating.HKCERT handled 12,536 security incidents in 2024.

Rest of Asia-Pacific

According to the 2025 Thales Data Threat Report, the Asia-Pacific region (including Australia, India, Japan, New Zealand, Singapore, South Korea and Hong Kong) faces:

-

Organizations above 65%Seeing rapid AI growth as a top security concern

-

63% Concerned about AI's lack of integrity

-

55% Worried about AI's untrustworthiness

-

47%'s Asia Pacific EnterprisesExperiencing a data breach

United States (highest cost)

The U.S. suffered the highest cost per breach:

-

Average US leakage cost: $10.22M(Record high, +9%)

-

This is more than 130% above the global average of $4.44M

-

Regulatory fines and slower testing times drive up costs

-

Multiple major breaches involving millions of records in 2025

V. Typical Cases and Insights

5.1 Hong Kong Deep Counterfeiting Fraud Case

Case overview

In 2025, a Hong Kong financial firm lost $25 million in an AI-powered deep forgery scam. Attackers used highly realistic deep-fake video and audio to impersonate the CFO and request urgent fund transfers.

attack process

-

detection phase: Attackers used open-source AI tools to analyze LinkedIn, company websites and annual reports to create detailed profiles of executives

-

Deep Fake Creation: Created a video clone of the CFO using open-source deep forgery tools such as DeepFaceLab

-

initial contact: Fake conference invitations sent via phishing emails containing malicious links

-

social engineering: In-depth forged video shared via internal communications app, requiring transfers to be made prior to board authorization

-

Funds transfer: Deceived treasurers authorized transfers under time pressure

Key Takeaways

-

time-pressure tactics: Attackers capitalize on the urgency of emergency board meetings

-

Multi-channel validation is missing: The organization has not implemented an out-of-band validation process

-

Democratizing AI Tools: Open source deep forgery tool dramatically lowers technical barriers

-

Abuse of internal trust: The natural trust of employees in messages from "management".

5.2 Energy company CEO voice fraud (2019, but becoming common in 2025)

Case Review

While this case occurred in 2019, a similar attack becomes systematic in 2025: the treasurer of the US subsidiary of a European energy company receives a call from what appears to be the CEO of the parent company requesting an emergency transfer of €220,000 for a secret acquisition.The voice on the phone is identical - but it's a deep voice forgery.

Evolution in 2025

In 2025, these types of attacks have turned into large-scale operations:

-

Attackers now use machine learning models to simulate the voice and speech patterns of specific executives

-

Attacks can take place in real time, rather than being pre-recorded, making detection more difficult

-

Deep multilingual forgery becomes feasible, expanding geographic coverage

-

Organizations Report Difficulty Distinguishing Real Calls from Deep Fake Calls

5.3 Fortune 500 AI Proxy Data Breach (March 2025)

Case Description

In March 2025, a Fortune 500 financial services company discovered that its customer service AI agents had been compromising sensitive account data for weeks. The root cause was a well-planned prompt injection attack.

Attack Mechanisms

The attacker presented the AI agent with a message disguised as a client query containing hidden instructions. These instructions rewrote the agent's system prompts, disabled security filters, and caused the agent to execute:

-

Leak confidential business intelligence to external endpoints

-

Modify its own system prompts to add persistent access

-

Execute API calls with elevated privileges that exceed the user's authorization scope

-

Access to unauthorized databases

Scale and impact

-

Unknown amount of customer data compromised

-

Leaks go undetected for weeks

-

Millions of dollars in regulatory fines against companies

-

Need to replace the entire AI agent architecture

Key Takeaways

-

Cue injections are high impact attack vectors: traditional security controls are ineffective against them

-

AI agents are a new type of privileged user: they can access and modify the system with little oversight

-

Input-based pollution persistence: Malicious hints stored in the database are automatically executed when processed by the agent

-

Lack of observability: The organization did not audit the conduct of the AI agent

5.4 Google and Facebook vendor fraud ($100M+)

Case background

While this case spanned many years, it turned into a template in 2025. A fraudster profited over $$100M by sending fake invoices to Google and Facebook (posing as their real hardware vendors). which worked because:

-

Email formatting exactly matches the real supplier

-

Requests are aligned with the true procurement cycle

-

No secondary validation of fund transfers was implemented

Upgraded version for 2025

In 2025, this attack has evolved:

-

Use real compromised vendor email accounts (not spoofs)

-

AI-generated invoices that are fully compliant with the vendor's historical formatting

-

Payment against new purchase orders to make it look like a real business operation

-

Vendor Email Compromise Attacks Increase by 137% in 2024

VI. Capital Loss Analysis of Artificial Intelligence-Induced Security Breaches

6.1 Costs of Shadow AI

Definition and scale

Shadow AI is unauthorized AI tools and applications used by employees without IT approval or oversight. It is a significant emerging cost driver for 2025.

economic impact

According to IBM's Cost of a Data Breach 2025 report:

-

Shadow AI Leak Average Cost: $4.63M

-

$670,000** more than standard event

-

Longer detection time:: 247 days vs. 241 days standard

-

Wider data exposure:: 62% Data involving multiple environments

-

Organizations can't track or control which AI tools sensitive data is shared to

root cause

-

lack of visibility:: 83%'s organization lacks basic controls to prevent data exposure to AI tools

-

classification crisis:: Organizations are unable to track what data is classified as sensitive

-

Employees bypass: Employees are using AI tools to increase productivity while circumventing IT security processes

-

Compliance Vacuum: No policy or governance framework for enterprise AI use

6.2 Capital Loss Costs of Cloud Misconfiguration

Current status of statistics

-

Leakage of 15%Starting with Cloud Misconfiguration (the third most important attack vector)

-

Cloud Security Incident for 23%Derived directly from misconfiguration

-

Enterprises with 27%Report suffers public cloud breach

-

Gartner predicts 99% of cloud failures by 2025Will stem from customer error (not cloud provider defects)

-

All leaks of 26%Caused by human error

cost difference

| storage location | Average leakage cost |

|---|---|

| Multi-environment storage | $5.05 million |

| private cloud | $4.68 million |

| public cloud | $4.18 million |

| local data | $401 million |

Multi-environmental leaks cost the most because:

-

Longer detection time (276 days vs. 241 days)

-

Need for coordinated response across environments

-

Increased complexity of compliance assessment

-

Increased third-party notification obligations

6.3 The Cost of AI Infrastructure Vulnerabilities

zero-day and open-source vulnerabilities

The exposed AI infrastructure involves not only misconfigurations but also unpatched vulnerabilities in core components:

-

ChromaDB Zero Day: Allow unauthenticated reads and writes

-

Redis Post-Use Vulnerability: Causing data corruption in vector storage

-

NVIDIA Triton Inference Server Vulnerability: Allow arbitrary data loading

-

NVIDIA Container Toolkit Vulnerability: Initialize external variables

The cost of developing these vulnerabilities is:

-

Time from discovery to exploitation of each vulnerability: Reduction from weeks (traditional software) to days (AI tools)

-

Leveraging scale: A single vulnerability can affect thousands of exposed servers

-

cascade effect: A single compromised AI service can lead to the compromise of an entire system

6.4 Capital Losses in Vector Database Poisoning

Attack Mechanisms

Vector databases (e.g., Chroma) store embeddings and documents retrieved by AI systems. Poisoning these databases can result:

-

The Retrieval Augmentation Generation (RAG) system returns incorrect information

-

AI agents make decisions based on faulty data

-

Difficult to detect persistent attacks (looks like normal operation)

costing

-

Costs of bad decisions due to poisoned databases: unquantified but potentially large (e.g., FAI makes bad investment decisions)

-

Remediation costs: retrain the model, restore from backup (usually offline)

-

Reputation costs: The public case for AI systems producing biased or incorrect outputs

-

Regulatory costs: Data quality issues violate data governance provisions

VII. Guidelines for key defense actions in 2025

7.1 Identity and Access Defense (priority: highest)

Implement password-free, phishing-resistant multi-factor authentication

Standard MFAs (SMS codes, e-mail links) have proven inadequate against modern attacks. According to NIST SP 800-63-4, organizations should implement:

Option 1: FIDO2/WebAuthn hard key

-

Use of hardware keys (USB or Bluetooth) as authenticator

-

Keys are protected by encryption and never leave the device

-

Prevent credential replay and man-in-the-middle attacks

-

Cost: $15-$50/year per user

Option 2: Built-in biometrics

-

Using Windows Hello, Face ID or Touch ID

-

Private key stored in chip, not exportable

-

Federation with identity providers (through OIDC/SAML)

-

Cost: included in existing equipment

Implementation parameters

-

financial department: All accounts 100% use passwordless MFAs

-

IT administrator: Password-free MFA for all privileged accounts

-

remote access: VPNs and cloud applications must use passwordless MFAs

-

callback validation: any funds transfer must be verified twice by a known phone number (not using the phone number in the message)

Implementation costs

| assemblies | (manufacturing, production etc) costs |

|---|---|

| Hard keys (5,000 users) | $75,000 |

| MFA Management Solutions | $50,000 |

| Deployment and training | $30,000 |

| Total annual cost | $155,000 |

ROI

-

Average leakage cost: $$444 million

-

Reduced risk of credential theft for 70%: $3.1M savings for $

-

ROI in 1 year: 20x

7.2 AI infrastructure hardening (priority: highest)

Inventory and categorize all AI systems

-

Create an AI Bill of Materials (BOM) listing all models, data sources, vector databases, and inference endpoints

-

Identify deployment location (local, AWS, Azure, Google Cloud, etc.)

-

Marking data sensitivity (public, internal, restricted, confidential)

-

Document dependencies and integrations with each AI system

Locking down exposed AI components

Immediate Action (Week 1):

-

Scanning all Internet-oriented Chroma, Redis, Ollama instances

-

Use of Shodan, Censys or internal vulnerability scanners

-

Cost: $0-$5,000 (if scanning tool is purchased)

-

-

Enable authentication and encryption

-

Add a strong password or API key to all vector databases

-

Enable TLS 1.3 encryption

-

Implementation of Role-Based Access Control (RBAC)

-

-

Enabling Audit Logging

-

Record all data read and write operations

-

Send logs to SIEM system for anomaly detection

-

Cost: $10,000-$20,000 (SIEM configuration)

-

Mid-term action (weeks 2-4):

-

Turn off unwanted Internet access

-

Moving AI services behind private subnets

-

Access using a VPN or bastion host

-

Implementation of network segmentation

-

-

Apply security patches

-

Apply the latest patches for ChromaDB, NVIDIA Triton, and more!

-

Establish a patch management process

-

Cost: $5,000-$15,000 (engineering time)

-

-

Implementation of runtime monitoring

-

Detecting Anomalous Patterns Using Behavioral Analysis

-

Monitor model output for signs of data poisoning

-

Cost: $20,000-$50,000 (tools + configuration)

-

Long-term action (months 2-3):

-

Establishment of CI/CD security

-

Supply Chain Security Scanning in the Model Training Pipeline

-

Dependency Version Control and Vulnerability Scanning

-

-

realizeZero Trust Architecture

-

Mutual TLS authentication between all AI components

-

Separate identities and short-term certificates for each service

-

Cost: $50,000-$100,000 (tools and engineering)

-

7.3 Agent AI governance framework (priority: high)

Scale of the problem

-

69% of enterprises deployed AI agents

-

Only 21% has adequate security controls

-

79% Lack of a formal AI agent governance policy

-

20% has no controls at all

Key components of the governance framework

1. List and classification of AI agents

Proxy list entry:

- Agent name and purpose

- Systems and data it accesses

- Permissions granted (create, delete, modify, read)

- Owner and emergency contact information

- Risk level (low, medium, high, critical)

- Deployment loop period (development, test, production)

- Audit date

2. Privileges and the principle of least privilege for guards

-

Agents can only access the data and systems necessary for their functioning

-

No access to global or elevated privilege APIs

-

Manual approval of sensitive operations (data deletion, permission changes, fund transfers)

-

Rigorous workflow governance

3. Behavioral monitoring and anomaly detection

-

Establish baselines for normal agent activity (data access patterns, API calls, execution times)

-

Real-time detection of deviations from the baseline

-

Automatic isolation of agents displaying anomalies

-

Audit all agent operations for forensics

4. Cue injection defense

-

Don't trust user input - clean up all external inputs

-

Security review of user queries prior to execution

-

Use strict input validation and type checking

-

Add a "not jailbreakable" command before the system prompt (although this is not a complete solution)

-

Separating agent commands from user data

5. Governance tools and frameworks

-

NIST AI Risk Management Framework (AI RMF):: Four core functions (governance, map, measurement, management)

-

ISO 42001: AI Management System

-

OWASP LLMRISK TOP 10: LLM Specific Vulnerabilities

-

Regular red team testing to check for proxy jailbreaks and abuse

7.4 Data classification and shading AI control (priority: high)

Implementation of enterprise data classification

According to IBM data, 83% organizations lack basic controls to prevent data exposure to AI tools. Solution:

| classification level | define | AI tool usage | typical example |

|---|---|---|---|

| openly | Can be shared publicly | limitless | Marketing copy, published documents |

| inside (part, section) | For internal use only | Approved tools only | Meeting notes, internal communications |

| secrets | Trade secrets or competitive | Disable all AI tools | Financial data, product roadmap |

| restricted | Personal or regulatory data | Prohibition -- legal risks | PII, PHI, payment information |

Shadow AI Control

Implement technical measures to prevent the use of unapproved AI tools:

-

Data Loss Prevention (DLP) - Block restricted data when trying to copy/paste to AI tools

-

browser quarantine - Running untrusted websites in isolated containers

-

API Gateway - Blocking traffic to public AI APIs like OpenAI, Claude, and others

-

SaaS governance tools - Detecting departmental use of unapproved AI SaaS applications

-

Staff Training - Explain why certain data cannot be processed for AI

Implementation costs

| containment | (manufacturing, production etc) costs |

|---|---|

| DLP Tools | $40,000-$80,000/year |

| browser quarantine | $30,000-$60,000/year |

| SaaS Management | $20,000-$40,000/year |

| Staff Training | $5,000-$10,000 |

| (grand) total | $95,000-$190,000/year |

ROI

-

Shadow AI Leak Cost: $4.63M

-

Reduced risk of shaded AI use at 60%: $ 2.78M savings

-

Payback period: less than 1 year

7.5 Secure automation and AI-driven detection (priority: highest)

Business Cases

Organizational implementations using security automation and AI:

-

Manual Safety Operating Cost Reduction for 60-80%

-

Average annual savings of $2.4 million(Enterprise implementation)

-

10x faster incident response time(from 4 hours to 15 minutes)

-

Reduced testing time by 80 days

-

5-fold increase in security incident handling capacity

Key implementation areas

1. SIEM + SOAR integration

-

Use SIEM to aggregate all security logs and events

-

Automating Common Response Workflows with SOAR

-

Cost: $100,000-$200,000 implementation + $50,000-$100,000/year maintenance

-

ROI: Reduced detection time means average savings of $1.9M/leakage

2. Behavioral analysis and anomaly detection

-

Establish baseline behavior for users and entities

-

Using machine learning to detect activities that deviate from the baseline

-

Automatic quarantine of suspicious accounts

-

Cost: $30,000-$60,000/year

3. Threat intelligence automation

-

Automatic association of known malicious IPs, domains, file hashes

-

Proactive Threat Blocking Indicators

-

Integration with external threat sources

-

Cost: $20,000-$40,000/year

4. Compliance automation

-

automated reporting

-

Continuous Control Verification

-

Cost: $15,000-$30,000/year

7.6 Finance department specific controls (priority: critical)

According to the data in this report, the financial industry is a major target for AI-driven BEC. Specific defenses:

Control lists:

| containment | Implementation modalities | (manufacturing, production etc) costs |

|---|---|---|

| Anti-Fishing MFA | All financial accounts 100% FIDO2 | $75,000 Deployment |

| callback validation | Mandatory second factor for transfer of funds | Process changes (at no additional cost) |

| Payment Approval Workflow | Multi-level authorization, amount thresholds | $20,000-$40,000 Tools |

| Supplier validation | DNS/DMARC check, third-party validation | $10,000-$20,000 Tools |

| Deep forgery detection | Video/Audio Authenticity Checker | $30,000-$50,000/year |

| Regular drills | Security Awareness Test to Simulate BEC Attacks | $10,000-$20,000/year |

Cost-effectiveness of risk mitigation:

-

Average BEC cost: $200,000-$500,000 per event

-

Defenses to stop 99% attacks

-

One successful stop pays for a year's worth of controls.

VIII. Summary of classic issues

Question 1: How many organizations report that AI is changing their threat exposure?

solution

Major vendor and agency reports describe AI as a significant factor in both attack and defense in 2025, growing at a rapid pace:

-

93%'s Security Leaders Expect Daily AI Attacks by 2025

-

89% Sees AI-Driven Protection as Critical to Closing the Defense Gap

-

76% reports AI attacks outpacing their defenses in speed and sophistication

-

Business leaders in 86% report at least one AI-related incident in the past 12 months

Question 2: Is AI deep faking really a major driver for BEC?

solution

Yes. The 2025 alert covers voice and text impersonation with realistic content. Organizations must:

-

Out-of-band validation of any request for transfer of funds or granting of access rights

-

Callback using a known phone number never received in a message

-

Implementing deep forgery detection tools for video verification

-

Increased requirements for approval of "urgent" funding requests

Deep forgeries now account for 6.51 TP3T of all fraud attacks, an increase of 2,1371 TP3T from 2022.

Question 3: Is exposed AI infrastructure really common?

solution

YES. Industry scans show hundreds of unprotected AI data stores and endpoints, often deployed as containerized and accessible on the public web:

-

200+ fully unprotected Chroma servers that can read/write data without authentication

-

More than 10,000 instances of Ollama exposed on the Internet

-

2,000 Redis servers unprotected

-

Many additional servers exposed but partially protected

-

These are misconfiguration issues, not zero-day issues - all should be fixed immediately!

Question 4: Does AI really reduce the cost of compromise for defenders?

solution

According to the IBM Cost of a Data Breach 2025 report, organizations using security AI and automation typically report lower average breach costs than their peers:

-

Organizations Using AI Tools Reduce Leak Lifecycle by 80 Days

-

Savings average $1.9 million

-

For multiple environmental leaks (highest cost), savings are even higher

-

Third-party analysis of the same dataset emphasizes the use of curve acceleration, with more companies reporting at least limited use of AI for detection and response

However, this is only valid for organizations that have deployed and are operating the tool correctly. The adoption curve is still in its early stages.

Question 5: What individual control should the finance department implement this quarter?

solution

The two-step rule:

-

Anti-phishing MFA for all financial accounts: Use FIDO2/WebAuthn, not SMS or email codes!

-

Mandatory callback validation for any payment or bank detail changes: Use a phone number from a known number stored in the employee's file, never the number received in the message

These controls dramatically reduce the likelihood of BEC success and are the most cost-effective way to block the most common initial access vectors.

Question 6: What vulnerability trends authoritative annual benchmark references?

solution

Start with these and then cross-check for sectoral nuances:

-

Verizon DBIR 2025 - Maximum evidence base for the event model

-

IBM Cost Data Breach Report 2025 - Cost and root cause analysis

-

CrowdStrike2025 Ransomware Investigation - Especially ransomware and AI-accelerated attacks

-

ENISA Threatening Landscapes 2025 - European perspective and emerging threats

-

Microsoft Digital Defense Report 2025 - Global view of counterparties and defenses

-

Trend Micro AI securityStatus report (first half of 2025) - AI infrastructure and agent security

IX. Industry references and gap analysis

9.1 Benchmarking of defense frameworks

Many organizations use maturity models and frameworks to assess their security posture. This analysis compares how leading frameworks handle AI security:

| Framework/standards | AI coverage | proxy governance | Cue Injection | suitability |

|---|---|---|---|---|

| NIST AI RMF | Comprehensive (Govern/Map/Measure/Manage) | expository | contain in a concealed form | your (honorific) |

| ISO 27001 | Limited (traditional information security) | not have | not have | lower (one's head) |

| ISO 42001 | Specialized AI management (added in 2024) | expository | expository | your (honorific) |

| CIS control | Limited (mainly infrastructure) | not have | not have | lower (one's head) |

| NIST Cybersecurity Framework v2.0 | Partially (AI considerations have been added) | portion | portion | moderate |

| PCI DSS 4.0 | Limited (only in the context of data protection) | not have | not have | lower (one's head) |

in conclusion: NIST AI RMF and ISO 42001 are the most applicable frameworks for AI security maturity assessment and implementation.

9.2 Defense Tools Landscape

SIEM/SOAR platform (event detection and response)

| offerings | AI detection capabilities | AI Agent Support | MCP Security | (manufacturing, production etc) costs |

|---|---|---|---|---|

| Splunk Enterprise Security | Strong (machine learning anomaly detection) | portion | not have | $150K-$500K/year |

| Microsoft Sentinel | Strong (Azure ML integration) | portion | not have | $50K-$300K/year |

| CrowdStrike Falcon XDR | Strong (behavioral analysis) | portion | not have | $200K-$600K/year |

| Datadog | moderate | not have | not have | $30K-$200K/year |

| IBM QRadar | moderate | not have | not have | $100K-$400K/year |

AI infrastructure security (container/vector database protection)

| offerings | Chroma Protection | Ollama Protection | Redis Protection | MCP Support |

|---|---|---|---|---|

| Wiz | yes | yes | yes | yes |

| Snyk | no | no | no | no |

| Aqua Security | portion | portion | portion | no |

| Trend Micro | yes | yes | yes | yes |

| Palo Alto Networks | portion | portion | yes | no |

Agent AI Security

| offerings | behavioral monitoring | Cue Injection Detection | Workflow governance | maturity level |

|---|---|---|---|---|

| Obsidian Security | yes | yes | yes | your (honorific) |

| Lakera | yes | yes | no | moderate |

| Rebuff | yes | yes | no | moderate |

| Robust Intelligence | yes | yes | no | moderate |

| Customizing SOAR workflows | portion | no | yes | lower (one's head) |

9.3 Internal capacity gaps

Actual state vs. desired state of a typical business:

| abilities | current state | desired state | gaps | Bridging costs |

|---|---|---|---|---|

| Inventory of AI infrastructure | 29% has a complete list | 100% | 71% | $50-$100K |

| AI Agent Governance Policy | 21% has a formal policy | 100% | 79% | $30-$50K (strategy) + $50-$100K (tools) |

| Cue Injection Defense | 5% implemented | 95% | 90% | $100-$200K (tools + improvements) |

| MFA Deployment (Finance) | 45% Use of anti-fishing MFAs | 100% | 55% | $75-$150K |

| AI Tools DLP | 18% has been implemented | 80% | 62% | $95-$190K |

| SIEM/AI integration | 35% has some kind of AI detection | 90% | 55% | $150-$300K |

| safety automation | 40% has a basic SOAR | 85% | 45% | $100-$200K implementation + $50-$100K/year |

Total gap closing costs: $600K-$1.2M initial + $100-$200K/year operating costs

X. Conclusion

10.1 Key findings

In 2025, AI has become a double-edged sword for cybersecurity posture. Key findings include:

-

Threat growth outpaces defense: While AI-driven defenses are improving, AI-driven attacks are growing and evolving even faster.761 TP3T's organizational reporting cannot match the speed of the attacks.

-

AI infrastructure is the new attack surface: Thousands of exposed AI systems (vector databases, inference servers, model repositories) create direct attack paths. These are not advanced threats - they are basic security hygiene issues.

-

Shadow AI is the new cause of data breaches: Uncontrolled use of AI tools leads to an additional $67 million/compromise. Most organizations lack basic controls.

-

Agent AI is the new privileged user: AI agents now have more system access than most human employees. Most organizations do not have a proper governance framework.

-

Deep forgery is now commercialized: Voice and video cloning has evolved from expensive customized attacks to relatively inexpensive large-scale operations. with major fraud occurring on an annual basis in 2025.

-

Defense ROI is clear: Organizations using AI defense save an average of $1.9M/leak. Organizations that invest early win in both cost and response time.

-

Significant regional differences: Hong Kong and other Asia-Pacific regions face higher-than-global-average threats. In particular, the financial center faces targeted BEC and deep counterfeiting activities.

10.2 Priority action items for decision makers

Immediate (days 0-30)

-

Inventory and categorize all AI systems

-

Deploy anti-phishing MFA to all accounts in the financial sector

-

Scanning the Internet-oriented vector database and adding authentication

-

Enable callback validation for financial fund transfers

Cost: $75K-$150K | ROI: Block Average $200K+ BEC

Short-term (days 30-90)

-

Establishment of AI agent governance framework and inventory

-

Implementing Enterprise Data Classification and Shadow AI DLP

-

Deploy behavioral analytics to detect anomalies

-

Perform security audits of all deployed AI systems

Cost: $150K-$300K | ROI: Reduced risk of shadow AI leakage $278M+

Mid-term (days 90-180)

-

Implementing SIEM + SOAR integration for AI-driven detection

-

Deploying Prompt Injection and Vector Poisoning Defense

-

Create security automation for faster response

-

Perform red team testing of the proxy system

Cost: $200K-$400K | ROI: 80 days shorter testing time, saving $1.9M/leakage

Long-term (days 180-365)

-

Complete Zero Trust Architecture Implementation

-

Establishing a secure SDLC for AI development and deployment

-

Establish continuous agent security monitoring

-

Developing an organization-specific AI security culture

Cost: $300K-$600K | ROI: Build in resilience to prevent high-cost events

10.3 Special recommendations for the Asia-Pacific region

In view of the specific threat scenario in Hong Kong, local organizations should pay particular attention:

-

BEC Defense for Financial Institutions: Strict payment governance should be implemented in the financial sector as a result of large transfers and international transactions

-

Retail Industry Fraud Detection: The retail sector has a fraud rate of 17.81 TP3T (+1131 TP3T YoY) and should prioritize investments in fraud detection tools

-

Cross-border threat intelligence: Hong Kong's geographic location makes it vulnerable to targeting by specific threat groups in the Asia-Pacific region. Share threat intelligence with regional CERTs and law enforcement agencies

-

regulatory compliance: Hong Kong's data protection regulations and increasing international compliance requirements make proper data categorization and protection in cloud and AI systems critical

-

Supply chain resilience: Given the extensive AI integration in the global supply chain, organizations should assess the security of third-party AI tools and services

10.4 Final Recommendations

rightchief security officer(CSOof the United Nations

-

Reassessment of risk appetite: The speed and scale of the AI threat has changed. Traditional dispassionate assessment cycles are too slow. Create a framework for rapid decision-making.

-

Investing in observables rather than instruments: Rather than acquiring the latest tools, the focus is on observables that can detect anomalies and track the behavior of AI systems.

-

Building AI security expertise: Most organizations have deep expertise in traditional cybersecurity but lack expertise in AI-specific threats. Prioritize recruiting or training AI security experts.

-

Implementation of proxy governance as a priority: Establish a governance framework before deploying agent AI at scale. Governance after the fact costs 10 times more than governance before the fact.

Recommendations for operational leadership

-

AI security is a business riskCybersecurity is not an "IT problem" - it's a business continuity risk. Integrate AI security into enterprise risk management.

-

Prevention is much cheaper than fixing: $4.44M cost per leak. The ROI of the defense investment is clear - average $2.2M savings per leak.

-

Shadow AI is a compliance risk: Unregulated use of AI tools may violate data privacy regulations (GDPR, CCPA, etc.). Governance framework in place to control risks.

-

Vendor risk has expanded: Any AI tool, cloud service or third-party module introduces a new dimension of risk. Implement rigorous third-party security assessments.

10.5 Prospects

By 2026, we can expect:

-

AI-driven attacks will continue to accelerate, especially in the area of proxy AI

-

Defense technology will mature, it will become more common for organizations to adopt AI tools for defense

-

Regulatory framework will follow, AI security governance using ISO 42001 and NIST AI RMF will become mandatory

-

Agent AI security will be a major focusThe majority of vulnerabilities and incidents will involve misconfigured or compromised agents.

-

Deep Counterfeiting Fraud to RiseUntil biometrics and multi-factor authentication become commonplace

The key is to act now. Those organizations that invest in AI security today will be in a position to have a competitive advantage next year, with faster threat detection, quicker response, and less cost and reputational damage.

Literature Reference

-

Verizon Data Breach Investigations Report (DBIR) 2025 - Largest Database of Incident and Breach Data

-

IBM Cost of a Data Breach Report 2025 - Global $444M Average Breach Cost, Analyzed by Industry and Region

-

CrowdStrike 2025 State of Ransomware Report - 76%'s Organization Cannot Match AI Attack Speed

-

Microsoft Digital Defense Report 2025 - National Level Threat and Defense Capabilities

-

Trend Micro State of AI Security Report 1H 2025 - 10,000+ Exposed Ollama Instances, 200+ Unprotected Chroma Servers

-

ENISA Threat Landscape 2025 - European Threat Perspective

-

World Economic Forum Global Cybersecurity Outlook 2025 - 93%'s Security Leaders Expect Daily AI Attacks

-

Kaspersky Financial Sector Report 2025 - 12.8% of B2B finance facing ransomware

-

KELA Escalating Ransomware Report 2025 - 34% Global Key Sector Growth, Manufacturing Growth 61%

-

HKCERT Hong Kong Cyber Security Outlook 2025 - 7,811 phishing cases (+108%), malware incidents +4.8x

-

NIST Artificial Intelligence Risk Management Framework (AI RMF) - An Implementation Framework for Enterprise AI Security

-

ISO 42001 - Standard for AI Management Systems

-

OWASP LLM Risk TOP 10 - LLM Specific Vulnerability Categories

-

McKinsey Deploying Agentic AI with Safety and Security - Best Practices in Agentic Governance

-

Obsidian Security Agentic AI Security Report - 69% Enterprises Deploy AI Agents, Only 21% Have Security Controls

-

DeepStrike AI Cyber Attack Statistics 2025 - 72% growth, $30B projected damage

-

KnowBe4 2025 Phishing Trends Report - 83% of Phishing Emails Using AI

-

Cysure 2025 BEC Report - Google/Facebook $100M+ Vendor Fraud Case

-

Deep Instinct Financial Services AI Cyberattack Survey - 45% Facing AI Attacks

-

NIST SP 800-63-4 - Standard for Authentication and Lifecycle Management, Including Passwordless MFAs

-

Hong Kong Police Cybersecurity Crime Statistics (H1 2025) - HK$3.04B loss, +15% YoY

-

Thales 2025 Data Threat Report APAC Edition - 65% Enterprises See AI as a Top Security Concern

-

Future CISO Hong Kong 2025 Cyber Defence - 6.2% Digital Fraud Rate

Original article by Chief Security Officer, if reproduced, please credit https://www.cncso.com/en/2025-ai-cyber-attack-trends-security-report.html