1. Introduction to OpenClaw

OpenClaw, formerly known as Moltbot and Clawdbot, is an open source agentic AI assistant. It is designed as a highly scalable automation engine that understands natural language commands and autonomously performs a range of complex tasks, such as triggering workflows, interacting with online services, and operating across devices. Because it can act directly on the digital world, the security research firm Backslash Security has described it as "AI With Hands".

OpenClaw's core architecture gives it powerful autonomous decision-making and execution capabilities. It not only parses user intent but also dynamically plans and executes the steps of a task, blurring the line that traditional software draws between user intent and machine execution. This capability has made it rapidly popular among developers and advanced users, and it is used in scenarios ranging from personal assistants to complex enterprise automation.

Along with the rise of OpenClaw, a unique ecosystem has emerged that consists of two main components:

ClawHub: This is the official skill marketplace, where users can publish or download "skills" that extend the functionality of OpenClaw. Skills are similar to browser plug-ins or mobile apps: they give AI agents new capabilities, such as controlling smart home devices, managing personal finances, or integrating with specific enterprise software. This open model greatly broadens OpenClaw's application scenarios, but it also provides a breeding ground for the spread of malicious code.

Moltbook: This is a social networking platform tightly integrated with OpenClaw. On this platform, autonomous AI agents built on OpenClaw can communicate, post content, and interact with each other in Reddit-like forums, just as human users do. While the concept is highly innovative, it also raises serious concerns about how AI agents can interact with each other safely and how the spread of malicious information can be prevented.

The following table summarizes the key components of OpenClaw and its ecosystem and their functions.

| Component | Type | Functional description |
| --- | --- | --- |
| OpenClaw | AI agent framework | Understands natural language, autonomously plans and performs tasks, and interacts with the digital world. |
| ClawHub | Skill marketplace | Provides downloadable skills that extend the functionality of OpenClaw. |
| Moltbook | AI social network | Allows OpenClaw-based AI agents to interact socially and exchange information. |

This ecosystem model, which combines a core framework, a skill marketplace, and a social network, makes OpenClaw more than a tool: it has evolved into a complex technology platform with social attributes. However, it is precisely this complexity and openness that expose it to unprecedented security risks.

2. OpenClaw and ecosystem risks

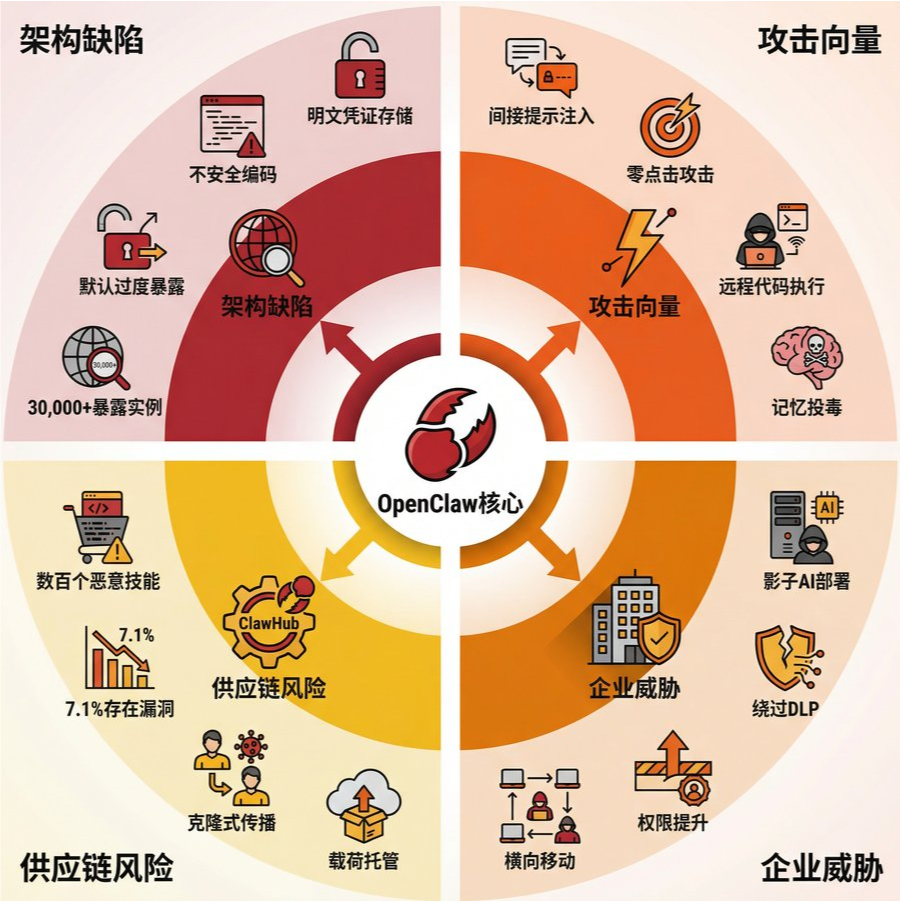

While OpenClaw's powerful features and open ecosystem bring convenience, they also introduce multi-layered security risks. These risks stem from design flaws in its own architecture and are amplified by its ecosystem, especially the ClawHub skill marketplace, creating a complex attack surface. In addition, "shadow deployments" in enterprise environments pose new governance challenges.

2.1 Architecture-level security flaws

From the perspective of the underlying architecture, OpenClaw suffers from several serious design and implementation flaws that provide opportunities for attackers to exploit:

Poor credential management: Multiple security reports note that early versions of OpenClaw stored sensitive credentials, such as API keys and session tokens, in plaintext. An attacker who gained access to the file system could therefore steal the user's entire digital identity and take control of every online service linked to it.
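
As a small illustration of why file-system access matters here, the following sketch checks whether a credentials file is readable by anyone other than its owner. It is a minimal example under stated assumptions: the function name is hypothetical, it inspects POSIX permission bits only, and it is not taken from OpenClaw. Tight permissions reduce exposure but do not replace encryption or a dedicated secret store.

```python
import os
import stat


def is_owner_only(path: str) -> bool:
    """Return True if no group or world permission bits are set on `path`.

    Hypothetical helper for illustration; relies on POSIX mode bits.
    """
    mode = stat.S_IMODE(os.stat(path).st_mode)
    # S_IRWXG / S_IRWXO cover read, write and execute for group / others.
    return mode & (stat.S_IRWXG | stat.S_IRWXO) == 0
```

A file created with mode `0o600` passes this check, while one with mode `0o644` does not, because other local users could read the plaintext secrets in it.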

Insecure coding practices: Code audits have revealed a pattern in OpenClaw of passing user input directly to dangerous functions such as eval. This practice opens the door to code injection attacks, allowing attackers to run arbitrary code in the AI agent's execution environment.
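
The general risk can be illustrated in Python, which is used here only for demonstration and is not the language of the audited code. The sketch below parses untrusted text with `ast.literal_eval`, which accepts plain literals and refuses anything that would require executing code. The function name `parse_user_value` is hypothetical.

```python
import ast


def parse_user_value(text: str):
    """Parse a plain literal (number, string, list, dict, ...) without running code."""
    try:
        # literal_eval only builds literal values; calls and attribute
        # access are rejected instead of being executed.
        return ast.literal_eval(text)
    except (ValueError, SyntaxError):
        raise ValueError(f"not a plain literal: {text!r}") from None
```

With this approach, input such as `[1, 2, 3]` is returned as a list, while input such as `__import__('os').system('id')` raises `ValueError` rather than running a command, which is what a bare `eval` would do.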

Default overexposure: OpenClaw's gateway binds to 0.0.0.0:18789 by default, which exposes its full API on every network interface of the host. According to Censys, as of February 8, 2026, more than 30,000 OpenClaw instances worldwide were reachable from the public Internet. While most instances require an authentication token to interact, this broad default exposure greatly increases the attack surface and makes misconfigured or vulnerable instances easy targets.
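
The difference between binding to 0.0.0.0 and binding to a loopback address can be checked mechanically. The sketch below uses Python's standard `ipaddress` module to classify a bind host; the function name and the three labels are assumptions made for this example, not part of OpenClaw's configuration.

```python
import ipaddress


def classify_bind_host(host: str) -> str:
    """Classify how widely a listener bound to `host` is reachable."""
    addr = ipaddress.ip_address(host)
    if addr.is_loopback:
        return "local-only"        # e.g. 127.0.0.1 or ::1
    if addr.is_unspecified:
        return "all-interfaces"    # e.g. 0.0.0.0 or ::
    return "single-interface"      # one specific address on the host
```

A deployment check could, for instance, refuse to start or print a warning when the result is `"all-interfaces"` and no firewall or reverse proxy is known to sit in front of the gateway.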

Permission model and sandboxing mechanism: HiddenLayer researchers have criticized OpenClaw for relying too heavily on its configured large language model (LLM) to make security decisions, and for a default permission model that is too lax. Unless a user actively configures and enables Docker-based tool sandboxing, AI agents run with full system-level access. This contradicts the principle of least privilege, a cornerstone of modern software security.
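
One way to picture the alternative is a default-deny check that is enforced in ordinary code before any tool runs, so that the decision does not depend on the model's judgment. The sketch below is illustrative only; `ToolPolicy` and `run_tool` are hypothetical names and do not describe OpenClaw's actual permission system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Default-deny policy: a tool may run only if it was explicitly allowed."""
    allowed_tools: frozenset = frozenset()

    def authorize(self, tool_name: str) -> bool:
        return tool_name in self.allowed_tools


def run_tool(policy: ToolPolicy, tool_name: str, action):
    """Run `action` only if the policy allows `tool_name`; otherwise raise."""
    if not policy.authorize(tool_name):
        raise PermissionError(f"tool {tool_name!r} is not allowed by policy")
    return action()
```

With an empty policy nothing is permitted, which is the least-privilege starting point; each capability then has to be granted deliberately rather than revoked after the fact.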

2.2 Complex Attack Vectors and Threat Scenarios

Based on the above architectural flaws, researchers have demonstrated and reported a variety of sophisticated attack vectors against OpenClaw:

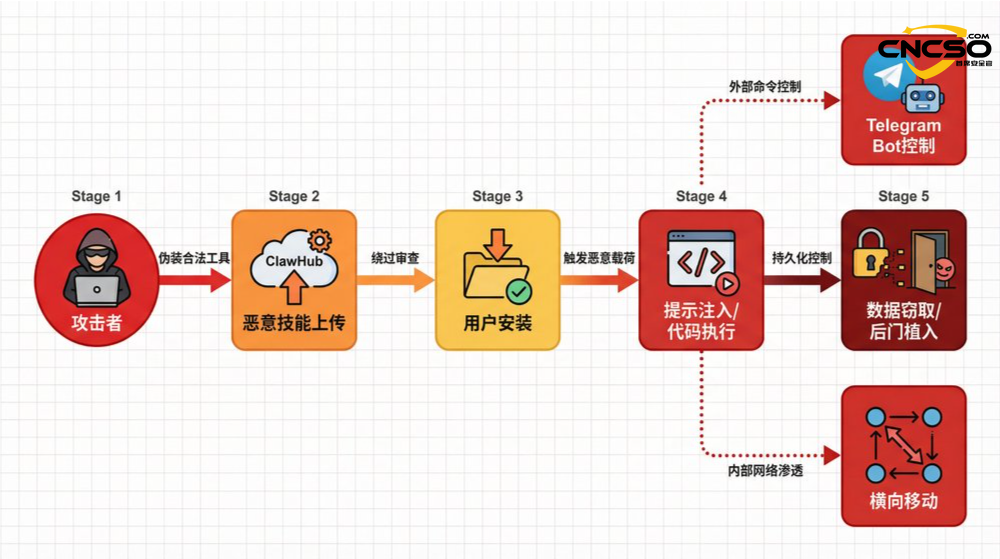

Indirect prompt injection: An attacker can embed malicious instructions in untrusted content (e.g., web pages, documents, or messages) that the AI agent will process. When the agent is asked to summarize or analyze this content, it may unknowingly carry out those instructions. In a typical case, a prompt injection payload embedded in a web page induces OpenClaw to append the attacker's instructions to its internal heartbeat file, giving the attacker persistent control.

Zero-click attack: Worse still, an attacker can build a "zero-click" attack chain. When the victim's AI agent automatically processes a seemingly innocuous document, a hidden prompt injection payload is triggered and implants a backdoor on the victim's endpoint, allowing the attacker to take control remotely through external channels such as Telegram.

One-click remote code execution (one-click RCE): A since-patched vulnerability allowed an attacker who tricked a user into clicking a malicious link to exploit the WebSocket channel of the gateway's control interface, leak OpenClaw's authentication token, and ultimately execute arbitrary code on the host.

Lateral movement within the ecosystem: On the Moltbook platform, attackers can use the platform's social mechanisms to amplify the spread of malicious content, steering other AI agents toward posts that contain prompt injections in order to manipulate their behavior and steal data or cryptocurrency. A misconfigured Supabase database even led to the compromise of 1.5 million API authentication tokens and 35,000 email addresses, facilitating large-scale attacks.

2.3 Supply Chain Risks in the ClawHub Skills Market

ClawHub, OpenClaw's official skill marketplace, is the centerpiece of its ecosystem, but it has also become the area hardest hit by AI supply chain attacks. Its risk profile is as follows:

| Risk dimension | Description |
| --- | --- |
| Proliferation of malicious skills | Security researchers have discovered hundreds of malicious skills on ClawHub that masquerade as legitimate tools but actually perform malicious activities such as data theft, backdoor implantation, or malware installation. |
| High proportion of security flaws | An analysis of nearly 4,000 skills found that about 7.1% had serious security vulnerabilities, such as exposing sensitive credentials in plaintext in the LLM's context window or in output logs. |
| Malicious activity at scale | Bitdefender's report reveals that attackers often clone legitimate skills, redistribute them under slightly altered names, and host their malicious payloads on paste services (such as glot.io) or in public GitHub repositories, forming a repeatable attack pattern at scale. |
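
The name-variant cloning pattern in the table lends itself to a simple similarity check at upload time. The sketch below uses `difflib.SequenceMatcher` from the Python standard library to flag new skill names that are close to, but not identical with, existing ones. The function name, the example names, and the 0.85 threshold are all assumptions chosen for illustration; nothing here describes a check that ClawHub is known to perform.

```python
from difflib import SequenceMatcher


def find_lookalikes(candidate: str, known_names, threshold: float = 0.85):
    """Return known names that `candidate` closely resembles without matching."""
    cand = candidate.lower()
    hits = []
    for name in known_names:
        other = name.lower()
        if cand == other:
            continue  # an exact match is the same skill, not a lookalike
        # ratio() is 1.0 for identical strings and approaches 0.0 as they diverge.
        if SequenceMatcher(None, cand, other).ratio() >= threshold:
            hits.append(name)
    return hits
```

A hit does not prove malicious intent, since legitimate forks often have similar names, so a flagged upload would more plausibly be queued for review than rejected outright.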

As security researcher Ian Ahl points out, the risks of this open marketplace model go far beyond those of a traditional browser extension store. Because AI agents are granted credentials that reach into a user's entire digital life, a single malicious skill could compromise every system the agent can access, giving it a much larger potential blast radius.

2.4 Corporate governance challenges posed by "shadow AI"

Due to the power and ease of use of OpenClaw, employees may deploy and use it within an organization's network without the approval of IT or security departments, a phenomenon known as "Shadow AI." This poses a serious governance challenge for organizations:

Bypassing traditional security controls: These unauthorized AI agents typically have elevated system privileges and can access files, transfer data, and open network connections, completely bypassing an organization's data loss prevention (DLP), security agents, and endpoint monitoring systems.

Creating new attack entry points: As SOCRadar's CISO Ensar Seker puts it, "Misconfigurations become the primary attack surface when agent platforms go viral faster than security practices mature". A "shadow" AI agent that is exposed to the public network or configured with overly permissive privileges can become a springboard for attackers to penetrate an organization's internal network.

To summarize, the security risks facing OpenClaw and its ecosystem are systemic and multidimensional. They expose shortcomings in the security design of AI agent technology and reveal new challenges for AI supply chain security and governance in open ecosystems and enterprise settings. It is against this background that OpenClaw decided to bring in external security capabilities to counter an increasingly severe threat landscape.

3. OpenClaw's VirusTotal integration program

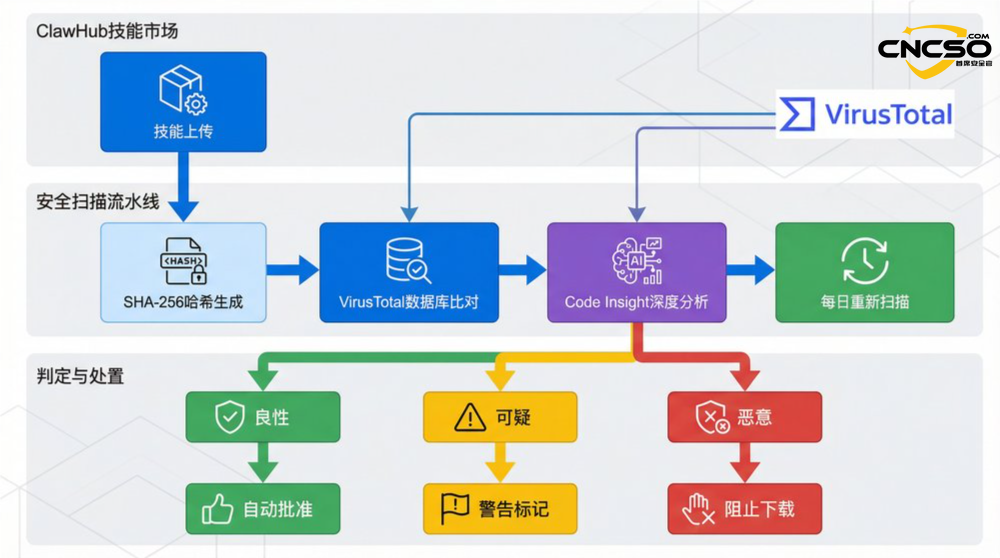

In the face of growing security threats and widespread community concern, the OpenClaw project team has taken a key step: partnering with VirusTotal, the well-known threat intelligence platform owned by Google, to run mandatory security scans on every skill uploaded to ClawHub. The program is designed to add a critical line of defense to the OpenClaw ecosystem against the spread of malicious skills.

3.1 Technical implementation process

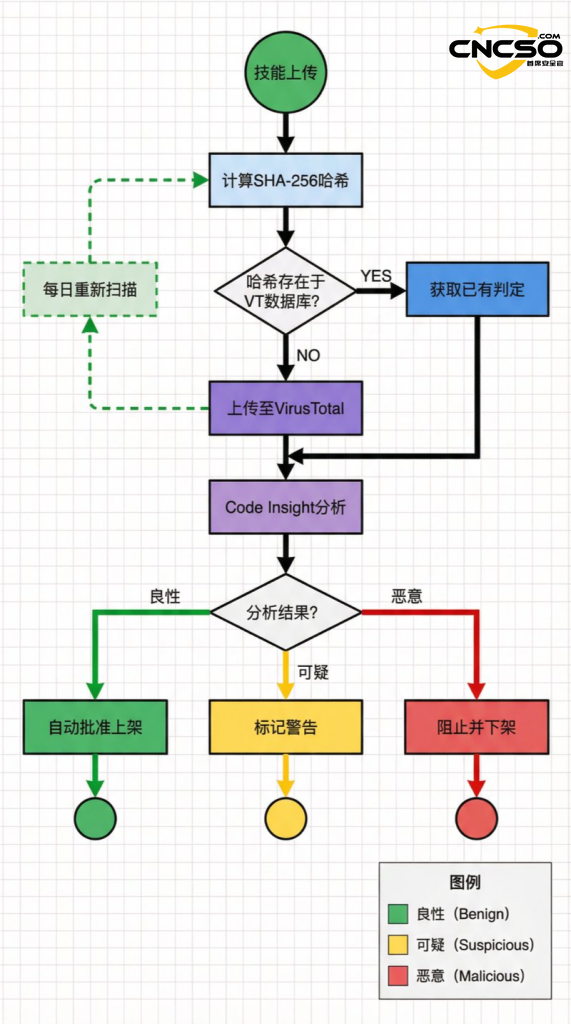

The core of this integration is an automated, multi-layered malicious code detection pipeline. Its implementation can be broken down into the following key steps:

1. Unique hash generation: When a new skill package is uploaded to ClawHub, the system first calculates its SHA-256 hash. This hash serves as the package's digital fingerprint for subsequent identification and comparison.

2. Comparison against existing intelligence: The system cross-references the SHA-256 hash against VirusTotal's large database of malware samples. If the hash already exists in the database and is marked as malicious, the system can reach a verdict quickly without further analysis.

3. Deep code analysis: If no matching hash is found in VirusTotal's database, the file is usually brand new or modified. In that case, the system uploads the complete skill package to VirusTotal and invokes its Code Insight feature for in-depth analysis. VirusTotal Code Insight uses a large language model to analyze code behavior and can identify potentially malicious intent, which is particularly important for detecting novel or obfuscated malicious code that traditional signature-based engines struggle to catch.

4. Continuous monitoring and rescanning: Security threats are dynamic. A skill that seems harmless today may tomorrow be found vulnerable, or may turn malicious through the external resources or codebases it depends on. To handle this, OpenClaw's program includes continuous monitoring: all active skills in the ClawHub inventory are rescanned daily so that skills whose status changes from benign to malicious can be found and removed promptly.
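
The four steps above can be sketched as a small pipeline. This is a minimal illustration, not ClawHub's implementation: the hash lookup and the deep analysis are passed in as plain callables (`lookup` and `deep_analyze`, both hypothetical) so the example runs without network access, and verdicts are simple strings. As a simplification, any verdict already known for a hash is reused, not only a malicious one.

```python
import hashlib
from typing import Callable, Dict, Optional, Tuple


def sha256_hex(package: bytes) -> str:
    """Step 1: fingerprint the skill package."""
    return hashlib.sha256(package).hexdigest()


def scan_skill(
    package: bytes,
    lookup: Callable[[str], Optional[str]],
    deep_analyze: Callable[[bytes], str],
) -> Tuple[str, str]:
    """Steps 2 and 3: reuse a known verdict, else fall back to deep analysis."""
    digest = sha256_hex(package)
    known = lookup(digest)  # returns None when the hash has never been seen
    if known is not None:
        return digest, known
    return digest, deep_analyze(package)


def rescan_all(
    packages: Dict[str, bytes],
    lookup: Callable[[str], Optional[str]],
    deep_analyze: Callable[[bytes], str],
) -> Dict[str, str]:
    """Step 4: re-evaluate every active skill, e.g. from a daily scheduled job."""
    return {
        name: scan_skill(data, lookup, deep_analyze)[1]
        for name, data in packages.items()
    }
```

In a test, `lookup` can simply be the `get` method of a dictionary that maps known hashes to verdicts. In a real deployment it would wrap a call to the scanning service, and `deep_analyze` would cover the upload and analysis step.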

3.2 Automated determination and disposition mechanisms

Based on the results of VirusTotal scans, ClawHub has established an automated determination and disposition workflow to ensure differentiated management strategies for skills at different risk levels:

| VirusTotal Code Insight verdict | ClawHub action | User experience |
| --- | --- | --- |
| Benign | Automatically approve the skill for listing on ClawHub. | Users can search for, download, and install the skill normally. |
| Suspicious | Display a prominent warning on the skill's page. | Users can still download the skill, but are clearly told that it may be risky and that they install it at their own risk. |
| Malicious | Immediately block downloads of the skill and remove it from the marketplace. | Users cannot search for or download the skill. |
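
The table above maps naturally onto a lookup from verdict to disposition. The sketch below encodes it directly; the type and field names (`Verdict`, `Disposition`, `listed`, `downloadable`, `warning`) are assumptions introduced for this example and are not taken from ClawHub.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    BENIGN = "benign"
    SUSPICIOUS = "suspicious"
    MALICIOUS = "malicious"


@dataclass(frozen=True)
class Disposition:
    listed: bool        # does the skill appear in search results?
    downloadable: bool  # can users download and install it?
    warning: bool       # is a risk warning shown on the skill page?


DISPOSITIONS = {
    Verdict.BENIGN: Disposition(listed=True, downloadable=True, warning=False),
    Verdict.SUSPICIOUS: Disposition(listed=True, downloadable=True, warning=True),
    Verdict.MALICIOUS: Disposition(listed=False, downloadable=False, warning=False),
}


def dispose(verdict: Verdict) -> Disposition:
    """Return the marketplace action for a scan verdict."""
    return DISPOSITIONS[verdict]
```

Keeping the mapping in one table-like structure makes the policy easy to audit against the published rules, and constructing `Verdict` from an unrecognized string raises `ValueError` instead of silently approving the skill.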

3.3 Limitations and necessary supporting measures

Despite integrating the powerful VirusTotal engine, OpenClaw's maintainers recognize that it is not a "silver bullet" for every security problem. The solution has inherent limitations, chief among them that it may be unable to catch cleverly hidden prompt injection payloads. Because prompt injection attacks exploit the language model's parsing and execution logic rather than traditional code vulnerabilities, they can be difficult to detect with pure code analysis tools.

Therefore, in addition to integrating VirusTotal, the OpenClaw project team has planned a series of important supporting security measures to build a more comprehensive security system. These measures include:

- Release a comprehensive threat model: Make a detailed threat model publicly available to help developers and users understand potential risk points.

- Publish a public security roadmap: Clarify plans and priorities for future security features and increase transparency.

- Establish a formal security reporting process: Provide security researchers with an open channel for reporting vulnerabilities and risks.

- Conduct a comprehensive codebase security audit: Invite a third-party security firm to audit OpenClaw's entire codebase in depth.

- Strengthen community monitoring: Add a user reporting function to ClawHub that lets logged-in users flag and report suspicious skills, drawing on the community to help monitor the safety of the ecosystem.

All in all, OpenClaw's integration of VirusTotal is a significant step forward for the AI agent ecosystem in addressing supply chain security challenges. It provides a concrete example of technical implementation and, more importantly, reflects a deeper understanding: no single technical solution can eliminate every risk, and it must be complemented by a transparent governance strategy, well-established processes, and broad community engagement in order to build a reasonably safe and trustworthy AI ecosystem.

4. Conclusion and outlook

As a pioneer among open source AI agents, OpenClaw has followed a trajectory that vividly illustrates the "growing pains" that accompany the spread of cutting-edge technology. Its powerful autonomy, open ecosystem, and viral growth together create a complex security challenge, at the core of which is a simple fact: the more capable the agent, the larger the blast radius when it is misused. From credentials stored in plaintext, to excessive default network exposure, to rampant supply chain attacks through malicious skills, the risks OpenClaw faces epitomize the problems of "shadow IT" and supply chain security in the age of AI.

In this context, OpenClaw's move to integrate the VirusTotal engine can be seen as an important practice in AI ecosystem security governance. Through automated scanning and a tiered disposition mechanism, the program establishes a basic security barrier for the skill marketplace and raises the bar for attackers. However, as the project acknowledges, technical scanning is not a panacea, especially against advanced threats such as prompt injection. This highlights that, in the AI security domain, purely technical defenses must be combined with a broader governance strategy.

Looking ahead, security for the AI agent ecosystem needs to advance systematically in the following areas:

1. Shift left: Integrate security design principles into the earliest development phase of AI agent frameworks. This includes mandatory sandboxing, strict privilege control, secure credential management, and filtering and sanitization of untrusted input.

2. Strengthen supply chain security: Drawing on experience from traditional software supply chain security, establish stricter admission, vetting, and traceability mechanisms for AI skill marketplaces, for example developer authentication, code signing, and dependency vulnerability scanning.

3. Develop AI-native detection and defense techniques: AI-specific attack vectors such as prompt injection and model poisoning call for new, specialized detection and defense techniques. These may involve real-time monitoring of model inputs and outputs, anomaly detection on model behavior, and red team/blue team exercises in which AI is used to counter AI.

4. Establish transparent governance and community collaboration: As OpenClaw plans to do, publishing threat models, making security roadmaps public, maintaining an accessible vulnerability reporting channel, and encouraging community oversight are key to building trust and defending against threats together.

Ultimately, the OpenClaw case shows that the security of AI agents is not just a technical issue but also a matter of ecosystem governance. While embracing technological innovation, we must pay equal or even greater attention to building a matching security framework, governance system, and community culture. Only then can these powerful AI tools truly serve human well-being rather than become a weapon in the hands of attackers.

5. References

[3] Bustan, M. S. T., & Zadok, N. (2026). OpenClaw Security Analysis. OX Security Research.

[4] Censys. (2026, February 8). Internet-Exposed OpenClaw Instances. Censys Data.

[7] AI Security Community. (2026). OpenClaw One-Click Remote Code Execution Vulnerability.

Original article by Chief Security Officer. If reproduced, please credit https://www.cncso.com/en/openclaw-integrates-virustotal-engine-scanning.html