Since the explosion of generative AI in 2023, the technological evolution of large language models (LLMs) has moved from simple text completion and dialogue to agentic AI with complex logical reasoning capabilities. By 2026, LLMs, represented by Anthropic's Claude family and OpenAI's new generation of models, have demonstrated significant advantages in code understanding, context handling, and long-range task planning.

Agentic AI Security: refers to the safety and controllability of AI agents that make autonomous decisions and invoke tools while performing tasks, and to the challenges such agents pose to existing security defenses.

Large models are no longer limited to static knowledge retrieval; they can now reason in depth about complex software architecture and business logic. This shift from “probabilistic prediction” to “in-depth reasoning” provides a new technical foundation for tackling long-standing problems in network security, such as zero-click vulnerabilities and the analysis of complex remote code execution (RCE) paths.

Large Models in Security: From Assistant to the Core of Defense

In network security practice, the role of large models is shifting from “security assistant” to “security core”. Traditional defenses rely heavily on signature matching and static rules, which cannot keep up with rapidly iterating polymorphic malware or with zero-day vulnerabilities rooted in complex logic.
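The limitation of signature matching can be illustrated with a minimal sketch. It assumes a hypothetical signature database that stores SHA-256 digests of known-bad samples; the names `SIGNATURES`, `ORIGINAL_SAMPLE`, `VARIANT_SAMPLE`, and `matches_signature` are invented for this example and do not describe any particular product.

```python
import hashlib

# Hypothetical known-bad sample and a signature database built from its digest.
ORIGINAL_SAMPLE = b"example-malicious-payload"
SIGNATURES = {hashlib.sha256(ORIGINAL_SAMPLE).hexdigest()}


def matches_signature(sample: bytes) -> bool:
    """Return True if the sample's SHA-256 digest is in the signature set."""
    return hashlib.sha256(sample).hexdigest() in SIGNATURES


# A trivially mutated variant: one padding byte appended to the same payload.
VARIANT_SAMPLE = ORIGINAL_SAMPLE + b"\x90"

if __name__ == "__main__":
    print(matches_signature(ORIGINAL_SAMPLE))  # True
    print(matches_signature(VARIANT_SAMPLE))   # False
```

A one-byte change is enough to evade an exact-match signature, which is why polymorphic samples that rewrite themselves on every iteration are hard to catch with this approach alone.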

| application dimension | traditional model | Large model-driven model |

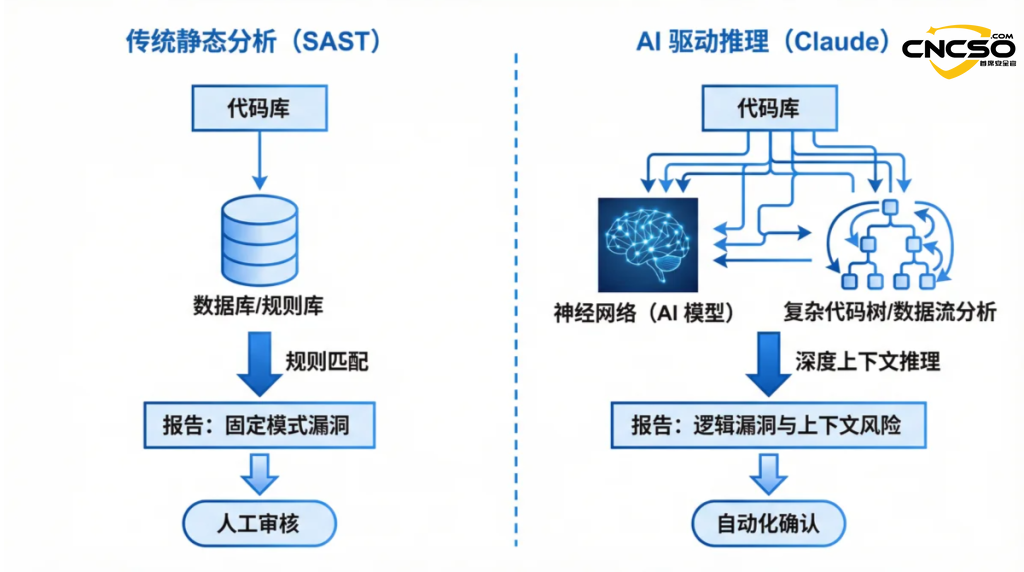

| vulnerability testing | Rule-based static analysis (SAST) | Deep auditing based on contextual reasoning |

| Threat intelligence | Manual collection and structured processing | Automated Multi-source Intelligence Fusion and Research |

| Code Fixes | Developers write patches manually | AI automatically generates and validates fix recommendations |

| Attack and defense drill | Penetration testing of preset scripts | Autonomous Intelligence Body-Driven Red-Blue Confrontation |

By learning from massive code bases and historical vulnerability data, a large model can identify subtle logic flaws in code. For example, when dealing with non-standard authentication flows or complex memory management logic, the model can simulate an attacker's line of thinking and uncover deeply buried flaws that traditional tools rarely reach.
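As a hedged illustration of the kind of logic flaw meant here, consider the sketch below. The function names, the `X-Auth-Token` header, and the token values are hypothetical. The flawed check contains no dangerous call for a pattern rule to match; the problem lies purely in the control flow, where a request without a token skips the comparison and is accepted.

```python
import hmac


def is_authorized(headers: dict, expected_token: str) -> bool:
    """Flawed check: a missing token bypasses the comparison entirely."""
    token = headers.get("X-Auth-Token")
    if token is not None and token != expected_token:
        return False
    return True  # reached both for a correct token and for no token at all


def is_authorized_fixed(headers: dict, expected_token: str) -> bool:
    """Corrected check: reject missing tokens, then compare in constant time."""
    token = headers.get("X-Auth-Token")
    if token is None:
        return False
    return hmac.compare_digest(token.encode(), expected_token.encode())


if __name__ == "__main__":
    print(is_authorized({}, "s3cret"))        # True: authentication bypass
    print(is_authorized_fixed({}, "s3cret"))  # False
```

Finding this class of bug requires understanding what the function is supposed to guarantee, not just which APIs it calls.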

Claude Code Security: Reshaping Software Supply Chain Security

On February 20, 2026, Anthropic officially released Claude Code Security, a deep code security analysis solution integrated into Claude Enterprise and Team editions. Unlike traditional static application security testing (SAST) tools, the core of the solution is its native reasoning capabilities.

Instead of relying on a library of predefined vulnerability signatures, Claude Code Security enables vulnerability discovery through the following core mechanisms:

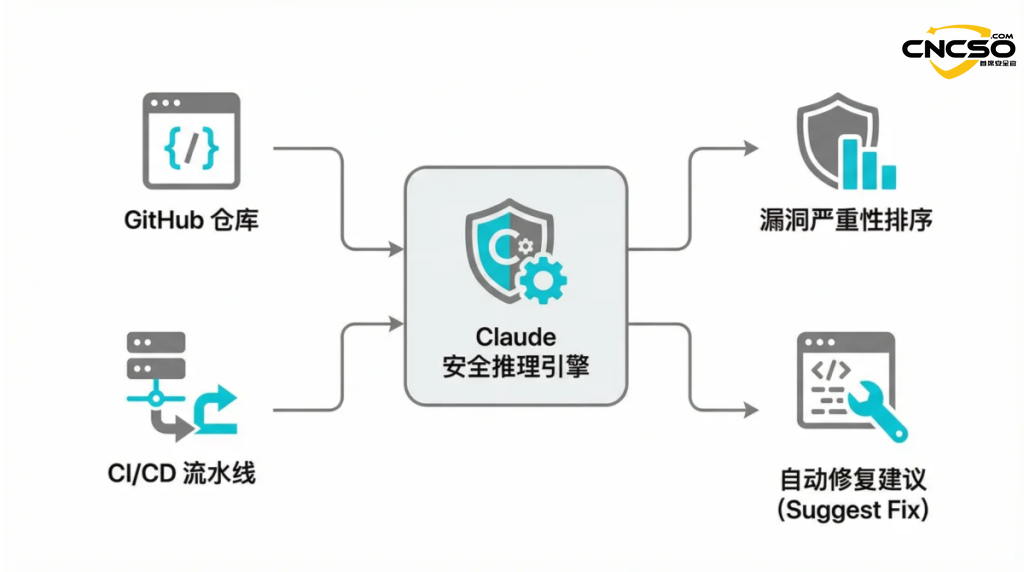

Architectural Mapping: Automatically builds the topology of interactions between application components and identifies potential attack surfaces across modules.

Data Flow Tracking (DFT): Traces how user input flows through the program and pinpoints entry points whose input is never sanitized.

Closed-loop Remediation (CLR): After identifying a vulnerability, the model not only provides a detailed natural language explanation, but also supports automatic patch generation through a “one-click fix” button.
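The idea behind data flow tracking can be sketched with classic taint propagation over a toy program. This is not Claude Code Security's implementation, which the source does not describe; the `Stmt` representation, the operation names, and `find_tainted_sinks` are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass(frozen=True)
class Stmt:
    """One statement of a toy straight-line program (hypothetical format)."""
    op: str                      # "source", "assign", "sanitize", or "sink"
    target: str = ""             # variable written by the statement, if any
    args: Tuple[str, ...] = ()   # variables read by the statement


def find_tainted_sinks(program: List[Stmt]) -> List[int]:
    """Return indices of sink statements that receive unsanitized user input."""
    tainted: Set[str] = set()
    findings: List[int] = []
    for index, stmt in enumerate(program):
        if stmt.op == "source":
            tainted.add(stmt.target)          # user input enters the program
        elif stmt.op == "sanitize":
            tainted.discard(stmt.target)      # sanitizer output is treated as clean
        elif stmt.op == "assign":
            if any(arg in tainted for arg in stmt.args):
                tainted.add(stmt.target)      # taint propagates through assignment
            else:
                tainted.discard(stmt.target)  # overwritten with clean data
        elif stmt.op == "sink":
            if any(arg in tainted for arg in stmt.args):
                findings.append(index)        # tainted data reaches a sensitive call
    return findings


PROGRAM = [
    Stmt("source", "name"),               # 0: name = user input
    Stmt("assign", "query", ("name",)),   # 1: query built from name
    Stmt("sink", args=("query",)),        # 2: query executed unsanitized
    Stmt("sanitize", "safe", ("name",)),  # 3: safe = sanitized copy of name
    Stmt("assign", "query2", ("safe",)),  # 4: query2 built from safe
    Stmt("sink", args=("query2",)),       # 5: query2 executed
]

if __name__ == "__main__":
    print(find_tainted_sinks(PROGRAM))  # [2]
```

Only statement 2 is reported, because the second query is built from the sanitized copy. Real analyzers must also handle branches, loops, function calls, and aliasing, which this sketch deliberately leaves out.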

The solution now supports integration with leading code hosting platforms such as GitHub, and is designed to push the boundaries of Shift-left Security, enabling developers to get expert security audit feedback as early as the coding stage.

Industry Impact: Paradigm Shift in Traditional Security Markets

The launch of Claude Code Security sent shockwaves through the capital markets, with shares of traditional security giants such as CrowdStrike and Cloudflare falling by more than 8% on the day of the launch. This reaction reflects the market's strong expectation that AI-native security will disrupt the traditional protection model.

In terms of the industry landscape, Claude Code Security's impact is seen in three main dimensions:

Toolchain Integration: Traditional standalone scanning tools may be replaced by AI agents integrated into development environments, making security detection less obtrusive and closer to real time.

Lower Defense Threshold: SMBs can use AI to defend against complex logic vulnerabilities such as authentication bypass without having to hire expensive senior security experts.

Shifted Offense-Defense Balance: As AI-driven automated remediation becomes more widespread, the window between the discovery of a vulnerability and its exploitation will shrink dramatically, forcing attackers to look for more sophisticated attack methods.

However, AI-driven security solutions are not foolproof. Model hallucinations can lead to false positives, and the accuracy of AI reasoning remains unproven for highly customized, closed architectures.

References

Cybersecurity stocks drop after Anthropic debuts Claude Code Security - SiliconANGLE, 2026.

Making frontier cybersecurity capabilities available to organizations - Anthropic Official Blog, 2026.

2026 AI Security Report: Trends and Predictions - Stellar Cyber, 2026.

Beyond Static Analysis: AI Powered Vulnerability Detection - Medium Engineering, 2025.

Original article by Chief Security Officer. If reproduced, please credit https://www.cncso.com/en/the-paradigm-shift-of-claude-code-security.html